Article summary

Working with some new customers this year has given me a fresh perspective on how confusing the terminology of the testing world can be. I think terms and definitions matter a lot, and not just because I’m a recovering academic. Several times recently I’ve found myself in a conversation taking a position in apparent opposition to my companion, only to eventually determine that we were in fact talking about the same thing. Other times we were using the same words but meaning very different things. I don’t know that the testing world is any more inconsistent in its terms than other technical fields, but it sure seems like it sometimes.

The terms I prefer (and generally what Atomic developers use as well, though I’m sure we have some internal variation) are useful in the context of an agile development process. They’re also meaningful in more traditional testing cultures, though in those cultures it’s more likely that dedicated testers or “QA engineers” perform the testing.

I have a simple taxonomy for the sorts of tests that developers create: unit, integration, and system. My friend and former colleague Paul Jorgensen taught this to me years ago and it’s worked quite well.

Unit Tests

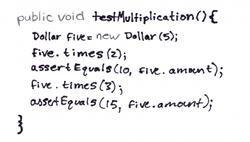

Tests which validate that a single method or function performs as expected. These tests are almost always “state-based” testing, meaning they make assertions on the return value of a unit, or the change of state in the system.

Example from Kent Beck’s Test-Driven Development

Integration Tests

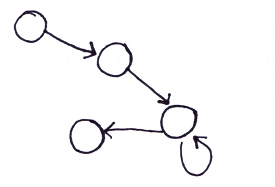

Tests which validate that multiple units or subsystems play well together. Software systems are the composition and integration of many small pieces of functionality. Integration tests may be state-based or interaction-based. Mocking libraries are often used for integration tests.

System Tests

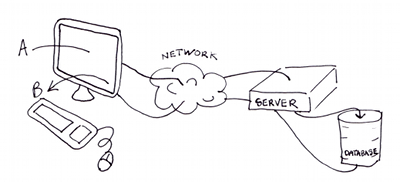

Tests which validate that the system as a whole, from the perspective of the “end-user”, operates correctly. I put “end-user” in quotes, since that might mean other systems or hardware, depending on the project.

The unusual breadth of Atomic’s range of projects gives me a lot of examples to draw upon. To make the definitions above more concrete, I’ve included examples from web, desktop and embedded applications.

Examples

Unit Test Examples

A function calculates the square of a floating point argument. A test for this function might include assertions that it returns 4.0 when given 2.0, 0.0 when given 0.0, 81.0 when given -9.0, etc.
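To make the square example concrete, here is a minimal sketch using Python’s standard `unittest` module. The `square` function and the test names are hypothetical stand-ins; the point is that every assertion is made on a return value, which is what makes these state-based tests.

```python
import unittest


def square(x: float) -> float:
    """Hypothetical unit under test: returns x squared."""
    return x * x


class SquareTest(unittest.TestCase):
    # State-based tests: each one asserts only on the return value.
    def test_positive(self):
        self.assertEqual(square(2.0), 4.0)

    def test_zero(self):
        self.assertEqual(square(0.0), 0.0)

    def test_negative(self):
        self.assertEqual(square(-9.0), 81.0)

    def test_fraction(self):
        # 0.1 has no exact binary representation, so compare approximately.
        self.assertAlmostEqual(square(0.1), 0.01)
```

Saved as a module, it runs with `python -m unittest <module_name>`. An exact equality check in the last test would be fragile, since `0.1 * 0.1` is not exactly `0.01` in floating point.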

A Ruby on Rails web app would have unit tests that confirm that the Model classes behave correctly.

An embedded application might have unit tests that verify computations, as above, or tests for the manipulations of registers.
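Register manipulation can often be unit tested without the hardware by isolating the bit arithmetic in a pure function. The sketch below is only an assumption about how that might look, written in Python to match the other examples here (production firmware would more likely be in C). `set_field` is a hypothetical helper, not part of any real driver API.

```python
import unittest


def set_field(reg: int, shift: int, width: int, value: int) -> int:
    """Hypothetical helper: write `value` into a bit field of a 32-bit register value.

    Bits outside the field are preserved.
    """
    if value < 0 or value >> width:
        raise ValueError("value does not fit in field")
    mask = ((1 << width) - 1) << shift
    return ((reg & ~mask) | (value << shift)) & 0xFFFFFFFF


class RegisterFieldTest(unittest.TestCase):
    def test_writes_field_into_empty_register(self):
        self.assertEqual(set_field(0x0, shift=4, width=3, value=0b101), 0x50)

    def test_preserves_bits_outside_field(self):
        # Clearing bits 4..6 of an all-ones register leaves every other bit set.
        self.assertEqual(set_field(0xFFFFFFFF, shift=4, width=3, value=0), 0xFFFFFF8F)

    def test_overwrites_previous_field_value(self):
        self.assertEqual(set_field(0x70, shift=4, width=3, value=0b010), 0x20)

    def test_rejects_value_too_wide_for_field(self):
        with self.assertRaises(ValueError):
            set_field(0x0, shift=4, width=3, value=8)
```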

Integration Test Examples

A running application written in an object-oriented language can be viewed as a series of messages sent between objects. An integration test in such an application would make assertions that the messages sent between objects were as expected (by name, frequency, parameters, etc.).
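This message-based view maps directly onto a mocking library. Here is a sketch using Python’s `unittest.mock`; `OrderService`, `gateway`, `mailer`, `charge`, and `send_receipt` are all hypothetical names chosen for illustration. The first test checks the name, parameters, and frequency of each message, and the second checks the order in which they were sent.

```python
import unittest
from unittest.mock import Mock, call


class OrderService:
    """Hypothetical object under test; collaborates with a gateway and a mailer."""

    def __init__(self, gateway, mailer):
        self.gateway = gateway
        self.mailer = mailer

    def place_order(self, email: str, amount_cents: int) -> None:
        # Send one message to each collaborator.
        self.gateway.charge(amount_cents)
        self.mailer.send_receipt(email, amount_cents)


class OrderServiceInteractionTest(unittest.TestCase):
    def test_sends_expected_messages(self):
        gateway, mailer = Mock(), Mock()
        OrderService(gateway, mailer).place_order("pat@example.com", 1999)
        # Each assertion checks message name, parameters, and that it was sent once.
        gateway.charge.assert_called_once_with(1999)
        mailer.send_receipt.assert_called_once_with("pat@example.com", 1999)

    def test_sends_messages_in_order(self):
        # Child mocks of one parent record their calls in a shared, ordered list.
        parent = Mock()
        OrderService(parent.gateway, parent.mailer).place_order("pat@example.com", 1999)
        self.assertEqual(
            parent.mock_calls,
            [call.gateway.charge(1999), call.mailer.send_receipt("pat@example.com", 1999)],
        )
```

Because the collaborators are mocks, these are interaction-based tests: they assert on the messages exchanged rather than on any resulting state.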

Ruby on Rails supports and encourages integration tests. A Rails integration test validates that the Controller, View and Model operate properly together. This test isn’t a system test, by my definition, because it doesn’t include the client side (the browser).

System Test Examples

Desktop applications are used by people. Most often today they have graphical user interfaces. A system test of such an application exercises the app from the user’s perspective. In other words, it pushes buttons, types into text fields, and makes assertions about what’s displayed or changed in the app.

System testing web apps requires driving the application through a browser, just like an end user would. Such tests exercise the browser, JavaScript, HTTP, and the full stack of the server side code.

Every embedded application interacts with the world in some fashion. A system test for a speed controller on an automated guided vehicle, for instance, might inject an analog speed signal from a programmable test rig and validate the expected digital output to a motor controller.

Other Terms

- Regression – The term “regression tests” is a common misnomer. Regression testing means running all of your tests every time you make a change, or every time you test. There are no separate, special types of tests that are regression tests. Automation obviously helps with regression testing, since it dramatically reduces the cost compared to manual regression testing.

- Automated Tests – Tests that can be run without any human intervention (other than starting the test runner). Applies to all levels of testing.

- Exploratory Testing – An exemplar of the context-driven school of testing, exploratory testing is a form of manual testing where the tester learns her way through the application making observations, finding bugs, and identifying interesting new test cases.

- Acceptance Tests – Tests, generally system-level tests, that serve as a contract between the development team and their customer. The customer agrees that the development team has satisfied the contract when the acceptance tests run clean. Acceptance tests should probably be tied closely to requirements.

- Functional Tests – A synonym for system tests. Also a term used in the Rails community for integration tests that validate the interaction between Controller and View, isolated from the Model.

- Non-functional Tests – (My personal favorite.) The term doesn’t refer to broken tests, just to those that validate aspects of the product having nothing to do with application functionality, such as installation, performance, upgradability, etc.
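A non-functional test can look a lot like a functional one with a different kind of assertion. In this hypothetical Python sketch, the test ignores what `build_report` returns and checks only that it finishes within a time budget; both the function and the two-second budget are assumptions for illustration.

```python
import time
import unittest


def build_report(rows):
    """Hypothetical unit whose speed, not its output, is under test."""
    return sorted(rows)


class ReportPerformanceTest(unittest.TestCase):
    # Assumed budget for illustration; a real one would come from requirements.
    BUDGET_SECONDS = 2.0

    def test_large_report_meets_time_budget(self):
        rows = list(range(100_000, 0, -1))
        start = time.perf_counter()
        build_report(rows)
        elapsed = time.perf_counter() - start
        # The assertion is about how fast, not about what the function returns.
        self.assertLess(elapsed, self.BUDGET_SECONDS)
```

Wall-clock thresholds depend on the machine running them, so a budget like this needs generous headroom to avoid flaky failures.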

Interesting article. I wrote a similar article here:

http://steveo1967.blogspot.com/2010/01/testing-terminology-definition-or.html

I like the point you make that it may not be just the testing profession that has trouble with terminology.

Hello Carl.

Personally, it pleases me that the test community doesn’t agree on terms. If we did, there would be no room for discussion, debate, and improvement – the matter would be “settled.” ( http://www.context-driven-testing.com/ )

The test standardization folks (ISTQB) would have us all use the same terms in order to eliminate ‘friction’, but I think there is value in fighting through labels to find the actual meaning, to see how we do things differently and see if we can learn from each other. I don’t mind the friction.

That said, I /do/ think it’s helpful for people to say “when I use these terms, this is what I mean”, which is what you’ve done here, and I can appreciate that. Thank you.

Thanks for the link, John. It’s a confusing world indeed. Looks like we have a mutual friend. I wonder what Michael would say about our terms.

Hi Matt

It would be nice if the confusion and inconsistency around terms were indicative of the good stuff you point out — I agree the world needs more of that.

I wonder if you can have it both ways: consistent terms and efficient communication at the same time you’re learning, changing practices and innovating?