
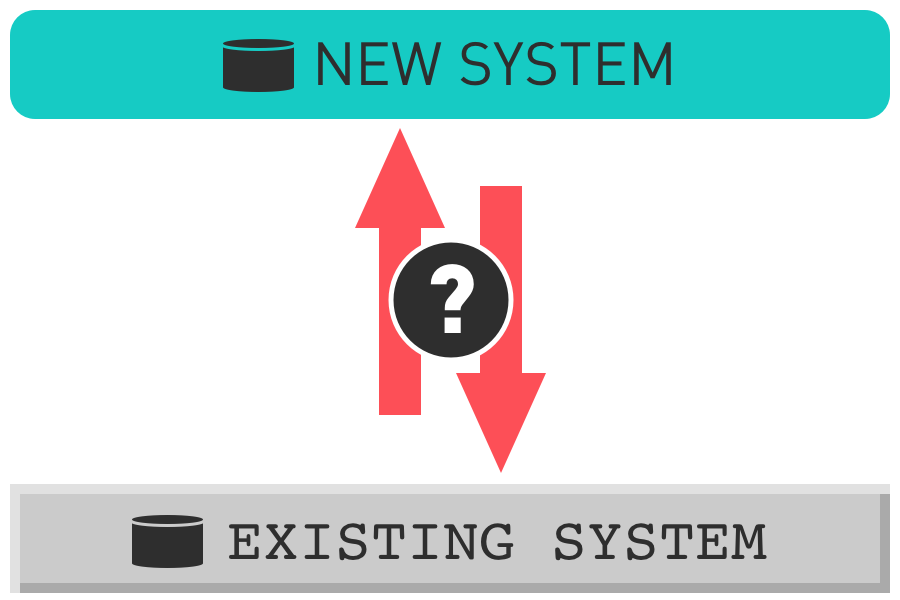

The Agile principle of delivering value early and often is critical when replacing large, existing software systems. To deliver that value, we want to be able to use both systems in parallel, or at least parts of them. In a rewrite, that usually means sharing data between the two systems. Over the course of this three-part series, we’ll look at a number of strategies to do this effectively.

First, a little terminology. Managing the scope of potential releases is key to providing value early and often, and I’d like a shorthand for talking about that scope throughout this post. To avoid arguments over a certain other acronym (MVP), I’m going to invent my own:

Viable Releasable Increment (VRI) – The set of features that could be released together to satisfy the needs of users and the selected data integration strategy.

Approaches to One-Way Integration

In this post, I’ll address one-way integration. Loading data in one direction can be a good way to feed read-only features of either system, or to keep the existing system up to date as an emergency fallback should the new system become unusable. Building integration in only one direction is typically less costly, but it comes with some significant constraints.

1. One-time load & switch

Load the production data for the segment that you’re replacing once, and then require all future work to happen in the new system. Ideally, you can disable the part of the old system that you’re replacing to prevent accidental use.
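To make the cutover concrete, here is a minimal sketch in Python, assuming both systems can be reached as SQLite databases and that the old system checks a feature flag before showing its order screens. The `orders` table, the `old_orders_ui_enabled` flag, and both functions are hypothetical illustrations, not part of any particular product.

```python
import sqlite3


def create_orders_table(conn):
    """Hypothetical schema shared by both systems for this example."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(id INTEGER PRIMARY KEY, customer TEXT, status TEXT)"
    )


def one_time_load_and_switch(old_db, new_db, feature_flags):
    """Copy the replaced segment once, then switch the old screens off."""
    rows = old_db.execute("SELECT id, customer, status FROM orders").fetchall()
    # One transaction: either every row lands in the new system or none do.
    with new_db:
        new_db.executemany(
            "INSERT INTO orders (id, customer, status) VALUES (?, ?, ?)", rows
        )
    # Sanity check before cutting over (assumes the new table started empty).
    (loaded,) = new_db.execute("SELECT COUNT(*) FROM orders").fetchone()
    if loaded != len(rows):
        raise RuntimeError(f"expected {len(rows)} rows, found {loaded}")
    # Hypothetical flag the old system checks before showing its order screens.
    feature_flags["old_orders_ui_enabled"] = False
    return loaded
```

Loading inside one transaction and checking row counts before flipping the flag keeps the switch all-or-nothing: if the load fails, the old screens stay on.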

Benefits

- It’s generally less expensive to load the data once vs. repeatedly.

- It decouples the new and old systems immediately, allowing more freedom to expand the new system’s capabilities.

Risks

- Falling back to the old system may take longer and involve a significant amount of manual work (data entry, re-enabling part of the old system, etc.).

- The VRI may be larger than with other strategies because it must support every function for the loaded data set.

When to use it

- When a set of data is relatively independent from the rest of the system and has a reasonably small feature surface area, so the VRI stays a reasonable size.

- When tooling to support other strategies would take significant time compared to the expected time to build the VRI.

- When significant differences in data models would make other strategies cost-prohibitive.

2. Scheduled batch loads

Load the production data from one system into the other on a regular schedule (e.g., nightly or hourly). Data can flow in either direction, old-to-new or new-to-old, depending on which system is making edits to it. Note that the VRI for new-to-old loading will be larger than for old-to-new, because the new system must then support editing that data rather than just displaying it.

Benefits

- It enables some use of both systems in parallel, with the caveat that the system receiving the batch loads should be read-only for the batch-loaded data.

- If loading new-to-old, the old system has correct data to serve as a fallback.

- It’s typically less costly than building event-based integrations.
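One way to honor the read-only caveat is for the receiving system to track which records arrived through the batch load and reject user edits to them. The sketch below assumes a simple in-memory store; `ReceivingStore` and `ReadOnlyForSyncedData` are hypothetical names used only for illustration.

```python
class ReadOnlyForSyncedData(Exception):
    """Raised when a user tries to edit a record owned by the source system."""


class ReceivingStore:
    def __init__(self):
        self.records = {}        # record id -> record fields
        self.synced_ids = set()  # ids that arrived through the batch load

    def apply_batch(self, rows):
        """Upsert rows from the batch load and mark them as source-owned."""
        for row in rows:
            self.records[row["id"]] = dict(row)
            self.synced_ids.add(row["id"])

    def user_update(self, record_id, **changes):
        """Apply a user edit unless the record belongs to the batch-loaded set."""
        if record_id in self.synced_ids:
            raise ReadOnlyForSyncedData(
                f"record {record_id} is maintained by the source system"
            )
        self.records.setdefault(record_id, {"id": record_id}).update(changes)
```

Enforcing the rule in the receiving system, rather than relying on users to avoid certain screens, means the next batch load never silently overwrites a local edit.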

Risks

- If loading old-to-new: When the new system is read-only for a feature set built to write that data, users get less value from it and the team gets less useful feedback.

- If loading new-to-old: Data in the old system could be corrupted if something goes wrong with the batch load, affecting its ability to serve as a fallback.

- Waiting for the next data load to see changes could frustrate users.

When to use it

- When the size of the VRI to support loading new-to-old is reasonable, and the risk of impacting data in the old system can be limited (e.g., only load new data instead of blowing the whole data set away and re-creating it).

- When the feature set in the new system is read-only anyway, so loading old-to-new doesn’t pose any real limitations on use in the new system (e.g., reporting, viewing, or extracting data).
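The suggestion above to load only new data, rather than deleting and re-creating the whole data set, is often implemented with a high-water mark: remember the latest `updated_at` value loaded and pull only rows changed after it. Here is a minimal sketch, assuming both sides are SQLite databases with a hypothetical `customers` table and that timestamps are ISO-8601 strings, which sort correctly as text. The table, column, and function names are illustrative.

```python
import sqlite3


def create_customers(conn):
    """Hypothetical table present on both the source and the target."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS customers "
        "(id INTEGER PRIMARY KEY, name TEXT, updated_at TEXT)"
    )


def incremental_batch_load(source, target):
    """Upsert only rows changed since the last run; never truncates the target."""
    target.execute("CREATE TABLE IF NOT EXISTS load_watermark (last_loaded_at TEXT)")
    # An empty watermark table yields '', which sorts before any ISO timestamp.
    (watermark,) = target.execute(
        "SELECT COALESCE(MAX(last_loaded_at), '') FROM load_watermark"
    ).fetchone()
    changed = source.execute(
        "SELECT id, name, updated_at FROM customers "
        "WHERE updated_at > ? ORDER BY updated_at",
        (watermark,),
    ).fetchall()
    if not changed:
        return 0
    # One transaction: a failed run leaves the target exactly as it was.
    with target:
        # Upsert by primary key; existing rows not in this batch are untouched.
        target.executemany(
            "INSERT OR REPLACE INTO customers (id, name, updated_at) VALUES (?, ?, ?)",
            changed,
        )
        target.execute(
            "INSERT INTO load_watermark (last_loaded_at) VALUES (?)",
            (changed[-1][2],),
        )
    return len(changed)
```

Because each run upserts by primary key inside a single transaction, a failed run leaves existing rows in place instead of a half-emptied table. One caveat of a strict `>` comparison: a row committed late with the same timestamp as the watermark can be skipped, so real jobs often re-read a small overlapping window and rely on the upsert being idempotent.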

3. Event-based integration (near real-time)

This type of integration syncs data from one system to the other in near real time, triggered by events that change data in the source system (e.g., a user editing data via a web app, an integration with another system, or a scheduled process).

Benefits

- Users spend less time waiting for data to show up.

- If syncing new-to-old: The old system stays more current, so it is more readily available to serve as a fallback.

Risks

- One system is still read-only for this data, so it carries some of the same risks as scheduled batch loads in that regard.

- It’s more complex to build than batch/bulk processes. Real examples of added complexity from recent work include deduplicating events, handling out-of-order events (e.g., a detail record created before its header record), and data changing between the time an event is recorded and the time it is processed.

- If loading old-to-new: You may be at the mercy of the old system’s quirks when it comes to emitting events.
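Two of the complications listed above, duplicate events and details arriving before their header, can be handled in the consumer. The following sketch assumes events are dictionaries with hypothetical `event_id`, `type`, `header_id`, and `data` fields, and keeps all state in memory; a production consumer would persist the seen IDs and pending records.

```python
from collections import defaultdict


class EventConsumer:
    """Applies header/detail events, tolerating duplicates and out-of-order delivery."""

    def __init__(self):
        self.seen_event_ids = set()
        self.headers = {}                         # header_id -> header data
        self.details = defaultdict(list)          # header_id -> applied details
        self.pending_details = defaultdict(list)  # details waiting for their header

    def handle(self, event):
        # Deduplicate: the same event may be delivered more than once.
        if event["event_id"] in self.seen_event_ids:
            return
        header_id = event["header_id"]
        if event["type"] == "header_created":
            self.headers[header_id] = event["data"]
            # Apply any details that arrived before this header.
            self.details[header_id].extend(self.pending_details.pop(header_id, []))
        elif event["type"] == "detail_created":
            if header_id in self.headers:
                self.details[header_id].append(event["data"])
            else:
                # Out of order: hold the detail until its header shows up.
                self.pending_details[header_id].append(event["data"])
        else:
            raise ValueError(f"unknown event type: {event['type']}")
        # Mark as seen only after successful processing, so failures can be retried.
        self.seen_event_ids.add(event["event_id"])
```

For the third complication, data changing between recording and processing, a common mitigation is to treat the event as a notification and re-read the record’s current state from the source before writing it; that step is not shown here.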

When to use it

- When the VRI to support loading new-to-old is a reasonable size and it’s important to keep the data in the old system current (a tolerance of minutes behind rather than hours), either to support other processes or to serve as a fallback.

- When the feature set in the new system is read-only, but the data needs to be more current than a scheduled batch load could support.

Closing Thoughts

There are other possible solutions in the gray area between scheduled batch loads and real-time, event-based integration. The specifics of a project may lead you to something in between.

We’ve used one-way integration as a stepping stone to validate partial system functionality in production and reduce rollout risk. We rolled out a real-time integration with only one direction enabled, asked users to review the new UIs in read-only mode, and monitored the integration closely for a few days to build confidence before turning on the full two-way integration.
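The staged rollout described above comes down to gating each sync direction separately. Here is a minimal sketch, with a hypothetical `SyncConfig` and `route` function, assuming each change records which system it originated in:

```python
from dataclasses import dataclass


@dataclass
class SyncConfig:
    old_to_new_enabled: bool = True
    new_to_old_enabled: bool = False  # off while users review the new UIs read-only


def route(change, config):
    """Return the system a change should sync to, or None if that direction is off."""
    if change["origin"] == "old" and config.old_to_new_enabled:
        return "new"
    if change["origin"] == "new" and config.new_to_old_enabled:
        return "old"
    return None
```

In this sketch, turning on two-way integration after the monitoring period is a configuration change (`new_to_old_enabled=True`) rather than new sync code.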

This is part one of a three-part series on Strategies for Data Synchronization for Rewrite Projects: