Article summary

When replacing a large existing software system, we often need to run the old and new systems in parallel, or at least parts of them. To do that, we usually need to share data between the two. In this three-part series, we’ll look at a number of strategies that you can use.

This is Part 2 in the series. You can read Part 1 if you want to catch up.

As a quick recap, I’m using the following shorthand as a way to talk about scope in this series:

Viable Releasable Increment (VRI) – The set of features that could be released together in order to satisfy the needs of users and the selected data integration strategy.

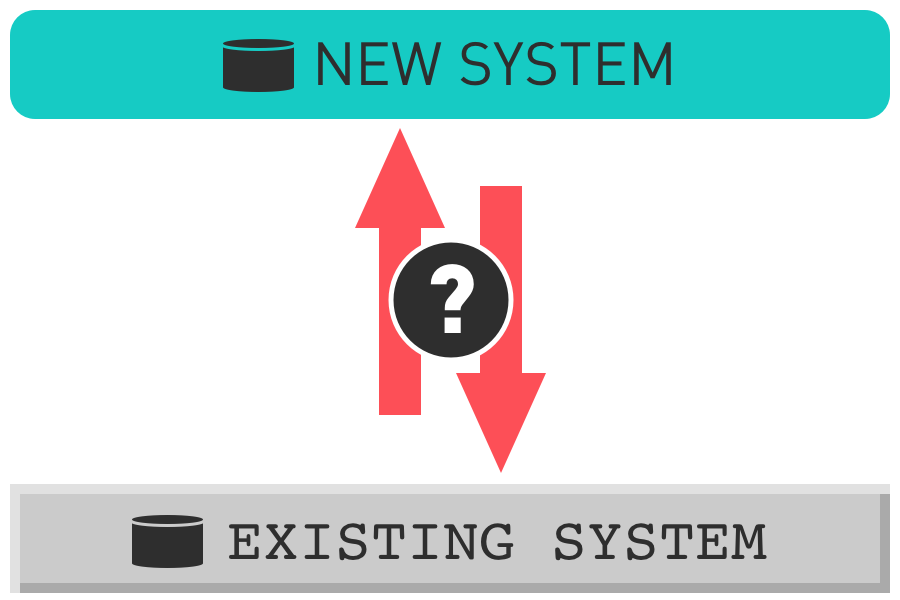

Two Approaches to Two-Way Integration

Two-way integration builds on the strategies I shared in Part 1 and expands on the value that integration can provide. This is the ultimate solution for using an existing and new system in parallel. It allows for fast value delivery and risk reduction by keeping the systems in sync.

1. Event-based integration (near-real-time)

This is near-real-time syncing of data between both systems, triggered by events that change data in either system (e.g., user editing data via a web app, integration with another system, scheduled processes, etc.).
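To make the idea concrete, here is a minimal in-memory sketch of event-based two-way syncing. Everything in it is hypothetical and chosen for illustration: the `System` and `ChangeEvent` classes, the `connect` helper, and the per-record version counter. A real integration would sit on top of a message queue or change-data-capture feed. The sketch shows two things any such integration has to handle: not echoing a change back to the system it came from, and ignoring stale or duplicate deliveries.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class ChangeEvent:
    origin: str      # name of the system where the change first happened
    record_id: str
    data: dict
    version: int     # increases by one with every edit to the record


@dataclass
class System:
    name: str
    records: Dict[str, dict] = field(default_factory=dict)
    versions: Dict[str, int] = field(default_factory=dict)
    subscribers: List[Callable[[ChangeEvent], bool]] = field(default_factory=list)

    def edit(self, record_id: str, data: dict) -> None:
        """A local change (user edit, scheduled job, ...) that emits an event."""
        version = self.versions.get(record_id, 0) + 1
        self._store(record_id, data, version)
        event = ChangeEvent(self.name, record_id, dict(data), version)
        for notify in self.subscribers:
            notify(event)

    def apply_remote(self, event: ChangeEvent) -> bool:
        """Apply a change from the other system without re-emitting it."""
        if event.origin == self.name:
            return False  # our own change echoed back: ignore it
        if event.version <= self.versions.get(event.record_id, 0):
            return False  # stale or duplicate delivery: ignore it
        self._store(event.record_id, event.data, event.version)
        return True

    def _store(self, record_id: str, data: dict, version: int) -> None:
        self.records[record_id] = dict(data)
        self.versions[record_id] = version


def connect(a: System, b: System) -> None:
    """Wire the two systems so each receives the other's change events."""
    a.subscribers.append(b.apply_remote)
    b.subscribers.append(a.apply_remote)


legacy = System("legacy")
rewrite = System("rewrite")
connect(legacy, rewrite)

legacy.edit("customer-1", {"name": "Ada"})            # edit in the old system
rewrite.edit("customer-1", {"name": "Ada Lovelace"})  # edit in the new system
```

After these two edits, both systems hold the same record. The sketch deliberately leaves out the hard part: if both systems edit the same record at the same moment, you need an explicit conflict rule (last writer wins, field-level merge, manual review), and picking that rule is a project decision rather than something this example settles.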

Benefits

- Data is up-to-date in both systems with minimal lag time.

- It enables work to happen in either application on short notice.

- It reduces potential VRI size because both systems can edit and read data, so user workflows and willingness to work in multiple tools become the primary limiting factors.

Risks

- Problems with the integration could harm data in both systems.

- This solution is more complex than other approaches.

When to use it

- The data model and storage of the old system don’t meet the needs of the new system.

- The smaller VRI size will result in meaningful gains for fast value delivery, de-risking production releases and letting stakeholders control how fast and how much to build.

2. Continue to use the existing data model and storage

In this approach, you build a new application on top of the existing data model and storage so that the new and old system share it.
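As a rough sketch of what this can look like in code, the example below puts a thin repository layer between the new application and the legacy schema. The table and column names (`CUST_MST`, `CUST_NO`, `CUST_NM`, `ACTV_FLG`) and the `CustomerRepository` class are invented for illustration, and an in-memory SQLite database stands in for whatever storage the existing system actually uses.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# Hypothetical legacy table, owned and still used by the existing system.
conn.execute(
    "CREATE TABLE CUST_MST (CUST_NO INTEGER PRIMARY KEY, CUST_NM TEXT, ACTV_FLG TEXT)"
)
conn.execute("INSERT INTO CUST_MST VALUES (1, 'Ada Lovelace', 'Y')")


class CustomerRepository:
    """The new application's only point of contact with the legacy schema."""

    def __init__(self, connection: sqlite3.Connection) -> None:
        self.conn = connection

    def get(self, customer_id: int):
        row = self.conn.execute(
            "SELECT CUST_NO, CUST_NM, ACTV_FLG FROM CUST_MST WHERE CUST_NO = ?",
            (customer_id,),
        ).fetchone()
        if row is None:
            return None
        # Translate legacy column names and flag values into the new app's terms.
        return {"id": row[0], "name": row[1], "active": row[2] == "Y"}

    def rename(self, customer_id: int, new_name: str) -> None:
        with self.conn:  # commits on success, rolls back on error
            self.conn.execute(
                "UPDATE CUST_MST SET CUST_NM = ? WHERE CUST_NO = ?",
                (new_name, customer_id),
            )


repo = CustomerRepository(conn)
repo.rename(1, "Ada King")  # a write made by the new application

# The old system reads the same table, so it sees the change immediately.
legacy_view = conn.execute("SELECT CUST_NM FROM CUST_MST WHERE CUST_NO = 1").fetchone()
```

Keeping the legacy names inside one class means the rest of the new application can use its own vocabulary, and it gives you a single place to change if the storage is replaced later.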

Benefits

- Data is always up-to-date in both systems.

- It’s less costly than building an integration since less tooling is built to synchronize data between separate systems.

Risks

- There may be technical challenges working with the existing system’s data storage tools.

- There may be performance challenges working with the existing system’s data model.

- The data storage may not scale well and may need to be replaced after the new application is built.

- Sticking with the existing system’s data model will limit divergence between the applications: the new system will have to be more like the old one than it would be with other approaches.

When to use it

- The data storage is relatively modern and provides reasonable means of access, e.g., SQL Server 2005 is a little out-of-date but workable, whereas Visual FoxPro would be quite a challenge.

- The ability to quickly build a new application on top of the existing data outweighs the restrictions the team will face by sticking (mostly) to the existing data model.

- The new application is expected to be more similar to the existing system.

Closing Thoughts

There are other possible solutions in the gray area between scheduled batch loads and real-time, event-based integration. The specifics of a project may lead you to something in between.
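One possible in-between option is a frequent, incremental poll: instead of reacting to each event or reloading everything on a schedule, a small job copies only the rows that changed since its last run. The sketch below assumes each source row carries an `updated_at` value; the function name, the row shape, and the integer timestamps are all hypothetical.

```python
def sync_changes(source_rows, target, last_synced_at):
    """Copy rows changed since the last run and return the new watermark."""
    newest = last_synced_at
    for row in source_rows:
        if row["updated_at"] > last_synced_at:
            target[row["id"]] = dict(row)
            newest = max(newest, row["updated_at"])
    return newest


source = [
    {"id": 1, "name": "Ada", "updated_at": 100},
    {"id": 2, "name": "Grace", "updated_at": 205},
]
target = {}

watermark = sync_changes(source, target, last_synced_at=0)           # first run copies both rows
source[0] = {"id": 1, "name": "Ada Lovelace", "updated_at": 310}
watermark = sync_changes(source, target, last_synced_at=watermark)   # second run copies only row 1
```

How often the job runs sets the lag between the systems, which is the trade-off that places this approach between batch loads and event-based integration.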

I place a high value on short feedback cycles. As a result, I push towards solutions in this space that make our production releases as small as I reasonably can. The time needed to build the integration is the factor that tends to push the most in the opposite direction. The larger the project is, the less of a factor cost tends to be and the more important it is to deliver incremental production releases.

Come back for the next part where we’ll discuss taking the integration further: Part 3 – No Integration.

This is part two of a three-part series on Strategies for Data Synchronization for Rewrite Projects: