I have high-frequency hearing loss. This common type of hearing loss makes the world sound muffled and can make it difficult to pick up on what people are saying. Unfortunately, as many folks with disabilities experience, the world isn’t engineered for me. But I’ve had some luck making it work in my day-to-day work and life, and AirPods have been a helpful tool.

Ski-Slope Hearing Loss

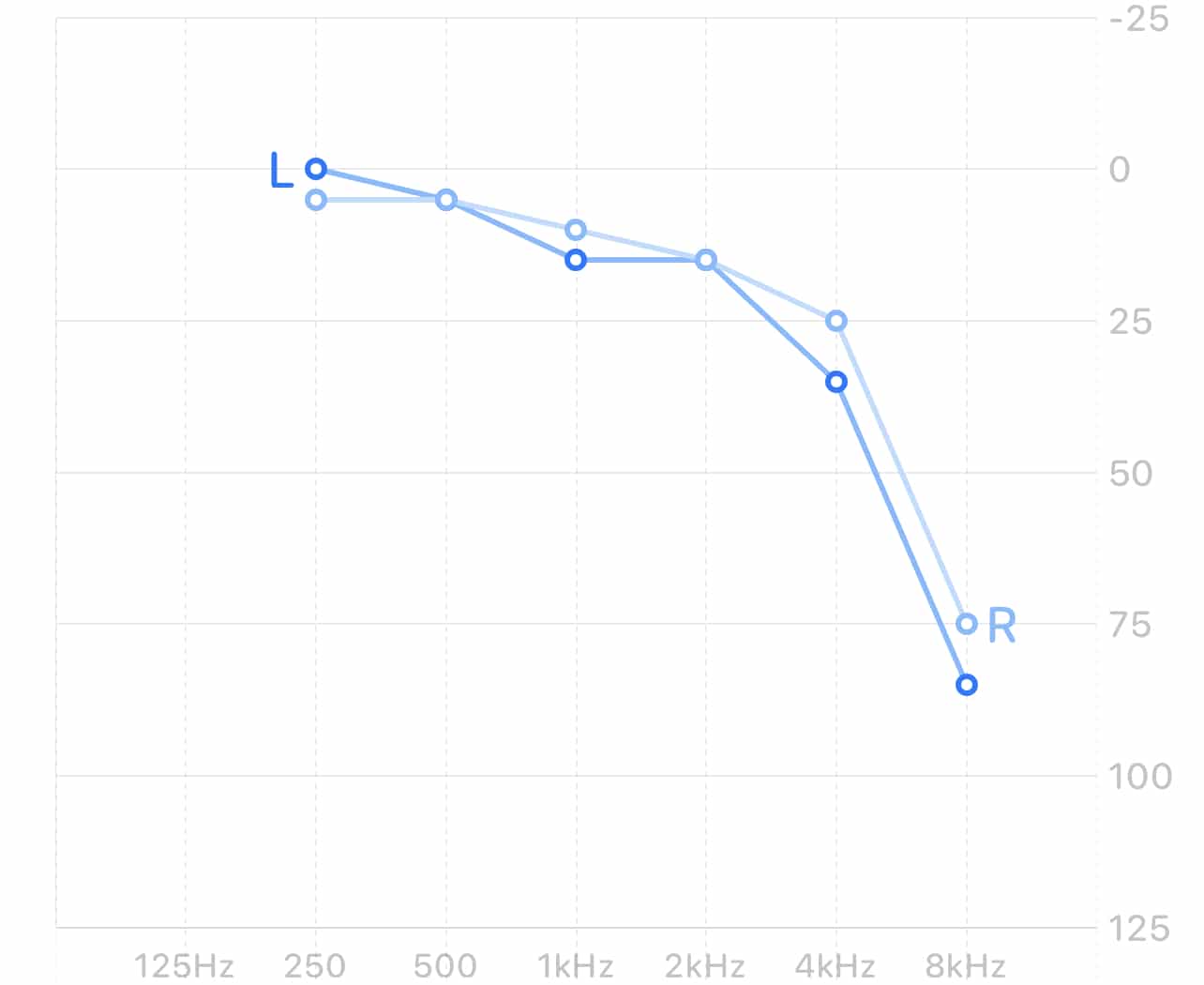

High-frequency hearing loss is sometimes called “ski-slope” hearing loss, because when plotted as an audiogram, it looks a bit like a mountain you can ski down. Mine looks like this:

The y-axis on this graph is dB HL, or decibels hearing level: how loud a tone has to be, relative to typical hearing, before I can hear it. The x-axis is hertz, the frequency of a pure tone. Audiograms like this one are measured using carefully calibrated equipment, which plays tones at varying loudnesses. An audiologist asks the person being tested if they can hear the tones.
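If it helps to think about it as data, an audiogram is really just a short table of frequencies and the quietest level heard at each one. Here's a minimal Python sketch of that idea. The numbers are invented for illustration (they are not my actual results), and the `split_hz` and `min_drop_db` cutoffs are arbitrary assumptions, not clinical criteria.

```python
# Hypothetical audiogram: pure-tone frequency (Hz) -> threshold (dB HL).
# These values are made up for illustration only.
AUDIOGRAM = {250: 10, 500: 15, 1000: 20, 2000: 40, 4000: 60, 8000: 70}


def average_threshold(audiogram, frequencies):
    """Mean threshold in dB HL over the given frequencies."""
    return sum(audiogram[f] for f in frequencies) / len(frequencies)


def looks_like_ski_slope(audiogram, split_hz=2000, min_drop_db=20):
    """Rough check: are high-frequency thresholds much worse than low ones?

    split_hz and min_drop_db are illustrative assumptions, not a
    diagnostic standard.
    """
    low = [f for f in audiogram if f < split_hz]
    high = [f for f in audiogram if f >= split_hz]
    drop = average_threshold(audiogram, high) - average_threshold(audiogram, low)
    return drop >= min_drop_db


if __name__ == "__main__":
    print(looks_like_ski_slope(AUDIOGRAM))
```

A higher number means a tone had to be louder before it was heard, so a line that drops toward the right of the chart shows up here as thresholds that climb with frequency.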

With this kind of hearing loss, I have the most trouble hearing sounds like “th” and “s” and sometimes “f.” Like many people who get help with their hearing loss, I got checked out when people close to me noted that I was misinterpreting them or asking them to repeat themselves often.

Hearing Aids

I wear lower-end hearing aids now, tuned by audiologists. These are designed to help me out in normal conversational situations like being in the same room with someone. They’re really good at what they do.

They came with an app for my iPhone that lets me adjust them. I can tweak their equalization or amplification. I can also select between several different modes that are designed to amplify sound uniquely for situations like crowded rooms, listening to music, and being outdoors.

They also have some really basic Bluetooth functionality. I can stream audio to them. I can, technically, listen to music from my iPhone or take a call using them. But the sound is really thin and rather quiet and could never stand up to a noisy room. In a nutshell, it’s a bonus feature.

My hearing aids are specifically for helping me with day-to-day, real-life conversations. They’re not helpful in the world of work that’s so often remote. But I have something else in my bag: AirPods Pro and AirPods Max.

AirPods and Headphone Accommodations

I originally got my AirPods Pro for listening to music on a noisy bus with their built-in noise cancellation. They also have a feature called Transparency Mode. This effectively runs the noise cancellation in reverse. The feature uses their built-in mics to let the outside audio in, so you can hear what’s going on around you.

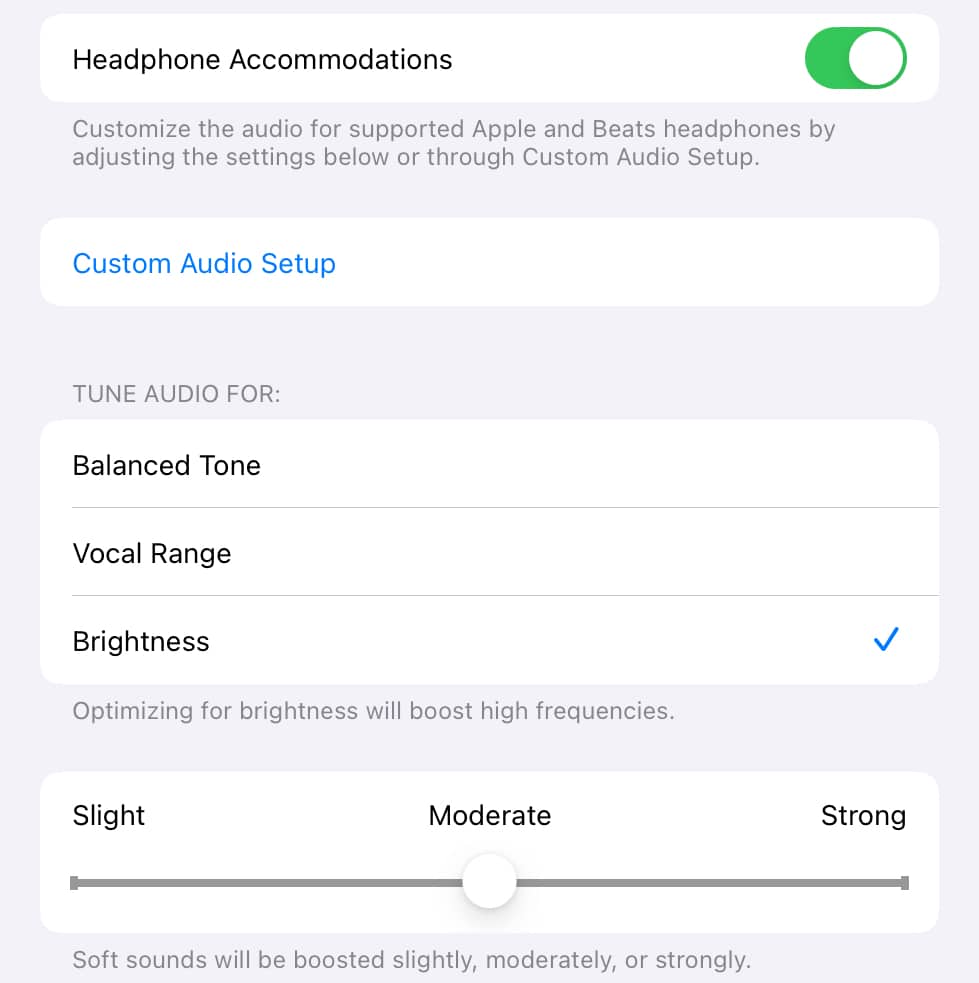

But then, during my hearing loss journey, iOS 14 came along and introduced an intriguing new accessibility feature called Headphone Accommodations.

This feature, available for several Apple headphones, can adjust the audio coming from phone calls or media to accommodate various kinds of hearing loss. With AirPods Pro, it can also apply the same adjustments to ambient audio coming in with Transparency Mode. The AirPods do this on-device; it happens with almost no latency.

I hadn’t bought my hearing aids yet at this point and hadn’t had a hearing test in a while. I wondered if this might mean I could use my AirPods as hearing aids.

Not Competing, But Complementing

The answer was, unfortunately, no. I could hear noises around my apartment much more clearly than I’d heard before, but it wasn’t doing much for conversations. The battery life wasn’t anywhere near as good as you can get out of a hearing aid, either. Four to six hours can’t stack up to more than 24.

AirPods do a great job with music, though (as you might expect). I didn’t even realize what I’d been slowly losing over the years to the hearing loss I was barely aware of. Old music I used to love came back with a clarity I’d been missing for years. New stuff I’d grown to love had a whole new dimension I’d never heard.

Calls on my iPhone, whether through the good old public telephone network or any other service, also improved. I found I didn’t need quite so much volume because the better sound profile made things much clearer.

Unfortunately, when I switched to my Mac, I lost that edge. The AirPods still improved the sound around me, but audio from the Mac itself was the same as it ever was. It’s still an improvement to have the AirPods over speakers and a mic, just because the AirPods speakers are so much closer to my eardrum. But I wish I could have that processing on the Mac, too.

My iPad is a special case. It also has Headphone Accommodations, but it can’t use my audiogram. The audiogram is stored in Apple Health, which is tied to my iPhone, presumably somewhere my iPad can’t get to it.

The iPad does have some generic options I can use. They’re not quite as good as the audiogram, but they’re better than nothing.

Going Bigger

I finally got my hearing aids as we tentatively started to come back outside from lockdown. My AirPods Pro were helping me keep in touch with people remotely, but they weren’t doing much for people I was physically near. Hearing aids helped me hear people nearby.

Now, I had another problem, though. I needed hearing aids to communicate with other nearby people (especially if they were wearing masks) when in the office. I needed AirPods to do remote work with people far away. This all meant I needed to keep switching them, and it was a huge pain to do so.

I knew a few people who were using AirPods Max as headsets. AirPods Max also supported Headphone Accommodations. They also fit right over my ears, hearing aids and all.

With AirPods Max, I have a solution for a few different things. Used with my Mac at the office, they’re a standard headset, and my hearing aids will do a little extra processing on their sound on the way into my ear canal. Used with my iPhone elsewhere and without my hearing aids, they’re headphones and a headset tuned specifically for my hearing loss for music and calls.

AirPods Max also has its own Transparency Mode, which comes in really handy in the office. Strangely, though, it does not support sound adaptation with Headphone Accommodations like the AirPods Pro do. I’m not sure why this is. It seems like it’d be an easy thing to add, but the feature’s just not there.

A Better Experience

The iOS 14 experience was good, and the iOS 15 experience has become even better. I was able to import my clinical audiogram directly from the Settings app instead of (ab)using some third-party apps. Apple improved the sound profile quite a bit in iOS 15.1. Background Sounds can mix in quiet noise that can help with my tinnitus.

Perhaps most notably, AirPods Pro’s Transparency Mode has been improved with a number of additional options like Conversation Boost and Ambient Noise Reduction. I tested these options out and, while I wouldn’t get rid of my hearing aids, came to the conclusion that AirPods Pro were better than nothing and could help me out in a pinch.

I wish it were easier to control the various options. There’s a Control Center option for hearing devices, but I have to make sure the AirPods are connected to the iPhone through the AirPlay controls, switch devices (it defaults to controlling my hearing aids), then fiddle with the settings to get where I need. I may want to turn Headphone Accommodations on and off frequently with the AirPods Max, depending on whether I’m wearing my hearing aids, so I’d love for this to take fewer steps.

I also wish the AirPods Max had some sort of Headphone Accommodations with Transparency Mode. I would love to wear the AirPods Max and hear the world around me more clearly.

Ultimately, though, I’m simply glad there’s so much I can do with these devices that accommodate my disability. Thanks, Apple, for paying attention to this. It’s made my life better.

Really great article! The Headphone Accommodations feature should come to the Mac/MacBook as well. I hope they add it soon, as it’s a game changer for me but only available on my iPhone.

Yes! That and iPad support for my audiogram would really make my day.