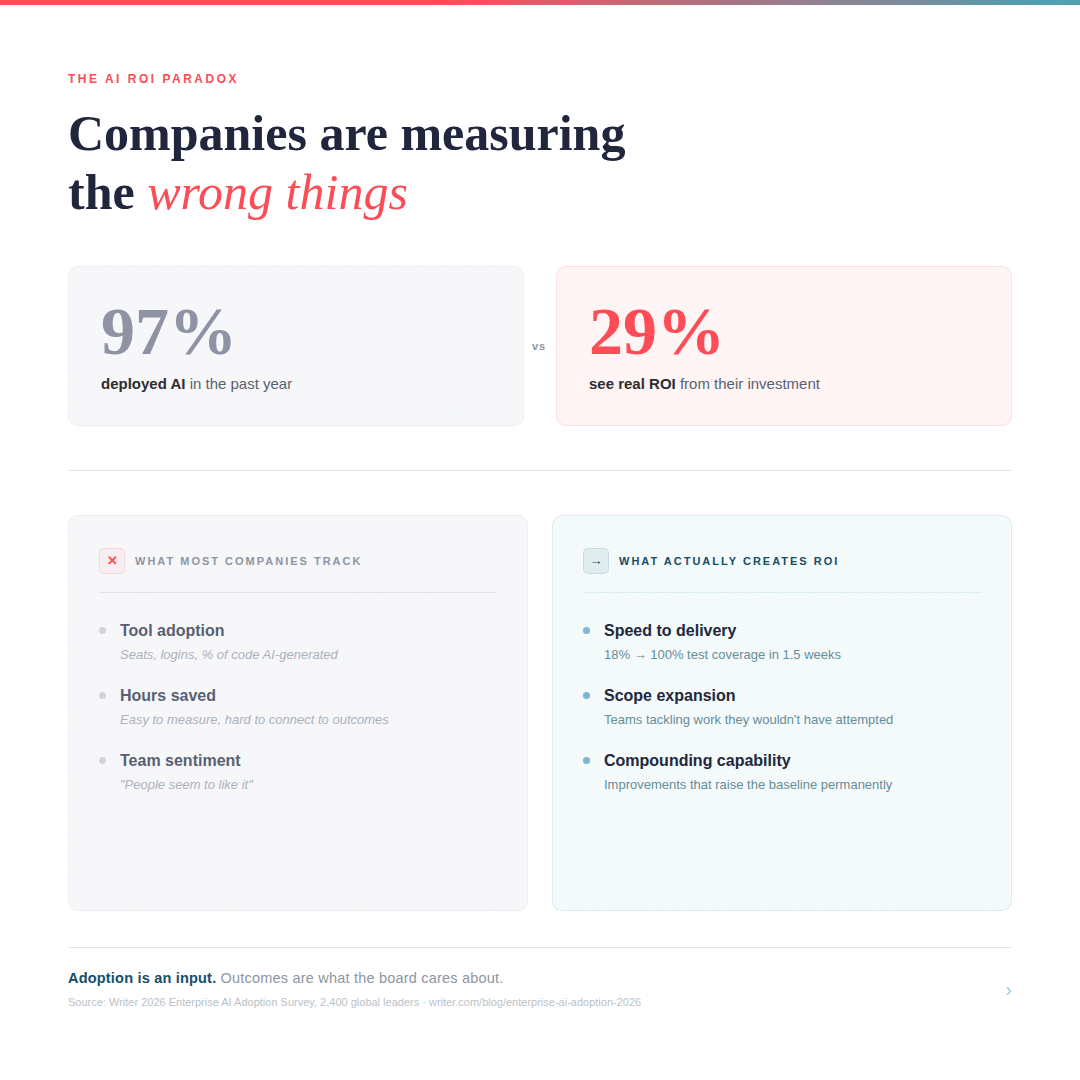

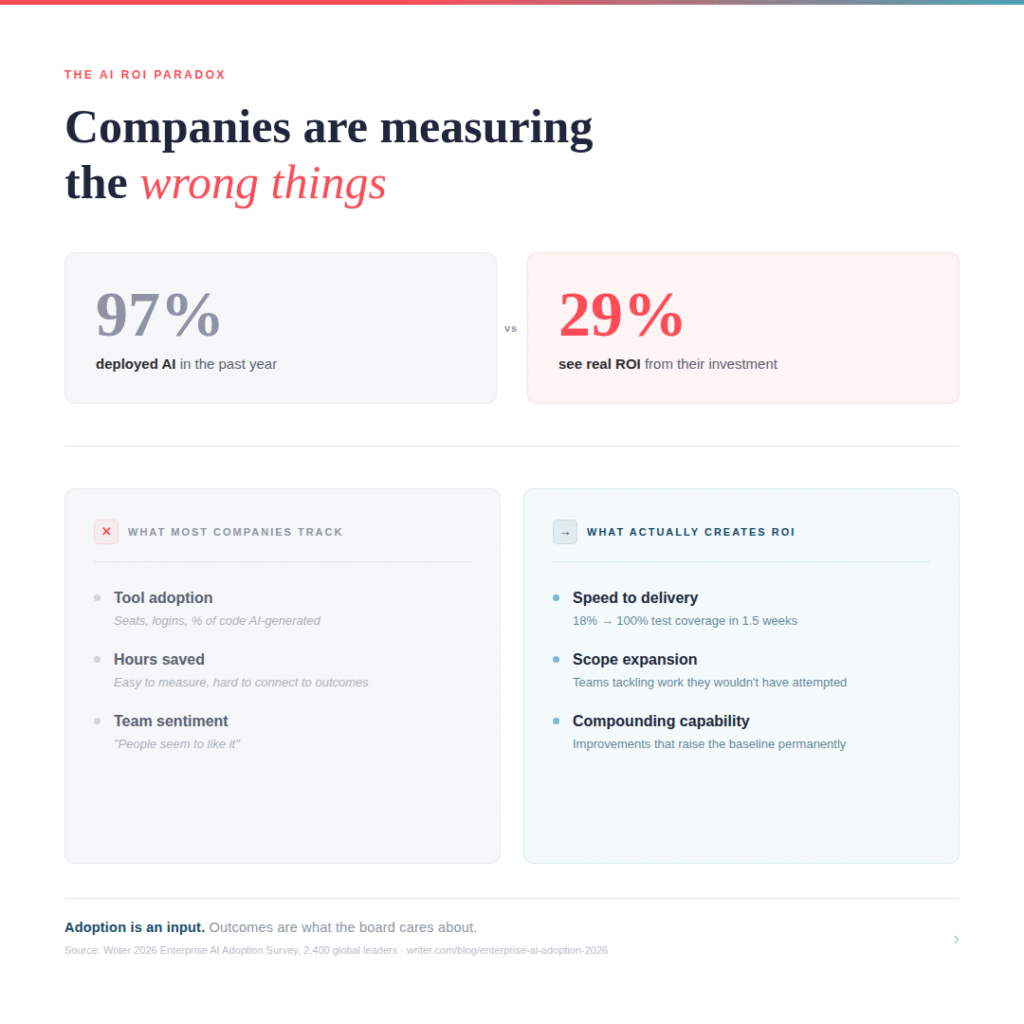

97% of executives say they’ve deployed AI in the past year.

Only 29% say they’re seeing real ROI.

(Both figures come from Writer’s 2026 Enterprise AI Adoption survey of 2,400 global leaders.)

I’ve been sitting with that gap for weeks now — not because the numbers surprise me, but because I keep watching it play out in real time across my client accounts.

Here’s the version of it I hear most often: a product leader or VP of Engineering tells me the team is faster, output is up, people are more confident. But when their CFO or board asks for hard numbers, they freeze. One client told me they needed “efficiency metrics to justify a contract extension” on an AI-assisted development engagement. The only metric they could reliably point to was hours saved.

Hours saved. That was the whole story.

I’ve started to think of “hours saved” as the vanity metric of AI adoption. It’s easy to measure, easy to present, and almost entirely disconnected from the business outcomes that leadership actually cares about. What I’ve found working across multiple accounts this year: the real value tends to land in three places — and only one of them is speed.

Speed is real, but it’s table stakes.

Yes, things are faster. One client’s team took a feature from 18% test coverage to 100% in a week and a half using AI-assisted development. That work had previously sat on a months-long roadmap. Another team is targeting a 25% reduction in a debugging operation that averages 16 hours per cycle. At a different company, a recruiter sourced 70 prioritized candidates, complete with fit rankings and personalized outreach suggestions, in 15 minutes. That work would have taken 20+ hours manually (and outside recruiters charge a nice commission to do it).
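For anyone who wants those figures in a form a CFO can scan, here is a minimal back-of-the-envelope sketch. It uses only the numbers quoted above; the variable names are illustrative, and the 20-hour figure is treated as a lower bound because the original estimate was "20+ hours".

```python
# Back-of-the-envelope arithmetic on the figures quoted in the text.
# Variable names are illustrative, not from any client system.

# Debugging operation: 16 hours per cycle, 25% reduction targeted.
debug_hours_per_cycle = 16
target_reduction = 0.25
hours_saved_per_cycle = debug_hours_per_cycle * target_reduction

# Candidate sourcing: 20+ hours manually (lower bound) vs. 15 minutes.
manual_sourcing_hours = 20
ai_sourcing_hours = 15 / 60
sourcing_speedup = manual_sourcing_hours / ai_sourcing_hours

# Test coverage on one feature: 18% to 100%.
coverage_before, coverage_after = 18, 100
coverage_gain_points = coverage_after - coverage_before

print(f"Debugging: {hours_saved_per_cycle:.0f} hours saved per cycle if the target is met")
print(f"Sourcing: at least {sourcing_speedup:.0f}x faster")
print(f"Coverage: +{coverage_gain_points} percentage points")
```

Note that every one of these is still a speed or volume number, which is exactly why they are easy to compute and easy to present.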

Those numbers are compelling. But if speed is all you measure, you’re missing the bigger story.

Scope expansion is where the real value hides.

This is the one that keeps coming up in my conversations and that I think most companies are undervaluing. AI isn’t just making your team faster at what they already know — it’s letting them attempt work they previously wouldn’t have touched.

One client’s development team started confidently working in Kubernetes and unfamiliar algorithm domains after adopting AI coding tools. They didn’t just do the same work faster — they expanded what “our team can handle” means. That kind of capability expansion doesn’t show up in a time-saved spreadsheet. But it absolutely shows up in what you can ship, what you can bid on, and how you staff projects.

A client engineering lead framed it as three value propositions: 3-4x speed, better code quality through automated testing, and scope expansion into areas the team previously avoided. Two of those three are invisible if you’re only tracking hours.

Compounding capability is the long game.

The teams I’ve watched get the most from AI tools aren’t just faster on one project. They’re changing how they work on everything that comes after. The skills compound. The tooling improvements build on each other. The team that figured out AI-assisted testing didn’t just ship that sprint faster; they raised the baseline for every future sprint.

This is harder to put on a slide, but it’s the difference between a one-time productivity boost and a structural advantage.

So why is the gap so big?

I think two things are happening at once.

First, most companies are measuring the wrong thing. They’re tracking tool adoption (how many seats, how often people log in, what percentage of code is AI-generated) instead of business outcomes (what shipped faster, what new capability exists, what risk got reduced). Adoption is an input. Outcomes are what the board cares about.

Second, and this one is uncomfortable, a lot of the leadership conversation isn’t happening at the right altitude. A colleague of mine recently observed that roughly 75% of the executives she meets are overwhelmed and confused by AI thought leadership. She posted on LinkedIn asking who wanted to learn the basic differences between ChatGPT, Claude, and Copilot. Fifty people responded within two hours, and fifty people joined the waitlist immediately afterward.

If executives are still sorting out which tool does what, asking them to build an ROI framework is premature. The ROI conversation probably needs to happen after the foundation is set. Not before.

Here’s the reframe I keep coming back to.

Stop asking, “Is our AI investment paying off?” and start asking three different questions.

- How much faster (and more effectively) are we delivering?

- What can we do now that we couldn’t before?

- And are those improvements compounding or one-off?

If you can answer those three, even roughly, you have a story your CFO can work with. Not because the numbers will be perfect, but because you’re finally measuring the right things.