Article summary

As many developers know, working with timestamps can be pretty terrible. But things weren’t always this way… They were worse. Clocks, and more specifically coordinated clocks, are a modern invention. Getting the whole world to agree on a single, computer-friendly timekeeping system has been a pretty bumpy road.

So how did the world manage to go from following the sun to a global, coordinated timekeeping system?

Years, Months, and Days

We take our standardized calendar system for granted, since most of the world has used the Gregorian calendar for several hundred years. The Gregorian system was created in 1582, when Pope Gregory XIII reworked the Julian calendar system (introduced by Julius Caesar in 45 BCE).

This system was created, but not universally adopted right away: Britain and its American colonies kept the Julian system until 1752. The Gregorian system tracks the solar year more closely by refining the leap year rule (every four years, except years divisible by 100, unless they are also divisible by 400), while the Julian system uses the simpler “every 4 years” rule. As a result, the calendars drifted apart from one another. By 1752, they were 11 days apart.
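The two leap year rules are easy to compare in code. Here is a minimal Python sketch; the function names are made up for illustration, and the 10-day starting gap reflects the days skipped when the Gregorian calendar was first introduced in 1582.

```python
def is_leap_julian(year: int) -> bool:
    # Julian rule: every fourth year is a leap year, no exceptions.
    return year % 4 == 0


def is_leap_gregorian(year: int) -> bool:
    # Gregorian rule: every fourth year, except century years,
    # unless the century year is also divisible by 400.
    return year % 4 == 0 and (year % 100 != 0 or year % 400 == 0)


# Years between the 1582 reform and the 1752 switch where the rules disagree.
disagreements = [
    y for y in range(1583, 1753)
    if is_leap_julian(y) != is_leap_gregorian(y)
]

# The reform skipped 10 days in 1582; each disagreement adds one more day.
gap_in_1752 = 10 + len(disagreements)
print(disagreements, gap_in_1752)
```

The only disagreement in that window is 1700 (a leap year under the Julian rule, but not the Gregorian one), which is how a 10-day gap became an 11-day gap.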

As a result, when Britain and its American colonies switched to the Gregorian system, Wednesday, September 2nd, 1752, was followed the very next day by Thursday, September 14th. According to popular accounts, rioting ensued; the people wanted their 11 days back. Other countries slowly switched to the system over the course of nearly two centuries (with Greece last, in 1923). And you thought Daylight Saving Time was bad!

Hours and Minutes

Local solar time

Timekeeping devices have been around for a very long time, from candles and sundials in 1500 BCE to clocks becoming more commonly available in the 15th Century AD and coming into mass production in the 18th Century.

As industrialization caused cities and towns to grow, people started living closer together and decided to use clocks to synchronize their schedules to the same hour and minute. As a result, towns and neighborhoods were synchronized to the same time, but each town set its own clocks to the local sunrise and sunset.

For example, Grand Rapids would set its clocks to noon when the sun was directly overhead (Grand Rapids’ “local solar time”). But local solar time shifts by four minutes for every degree of longitude. Such a system would put Atomic Object’s Grand Rapids office off by about eight minutes from its Ann Arbor office.
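The four-minutes-per-degree figure falls out of simple arithmetic: the Earth turns 360 degrees in 24 hours. Here is a rough Python sketch of the Grand Rapids and Ann Arbor comparison. The longitudes are approximate values assumed for illustration, and the helper name is made up.

```python
# 24 hours * 60 minutes spread over 360 degrees of longitude.
MINUTES_PER_DEGREE = 24 * 60 / 360  # 4.0

# Approximate longitudes in degrees west (assumed for this sketch).
GRAND_RAPIDS_LON_W = 85.67
ANN_ARBOR_LON_W = 83.74


def solar_offset_minutes(lon_a_west: float, lon_b_west: float) -> float:
    """Minutes by which local solar time at A lags local solar time at B."""
    return (lon_a_west - lon_b_west) * MINUTES_PER_DEGREE


offset = solar_offset_minutes(GRAND_RAPIDS_LON_W, ANN_ARBOR_LON_W)
print(f"Grand Rapids lags Ann Arbor by about {offset:.1f} minutes")
```

Grand Rapids is farther west, so the sun reaches its highest point there a little later, and its clocks would run just under eight minutes behind Ann Arbor’s.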

As you can imagine, this was a hassle for train managers and passengers alike, and it would be an absolute nightmare to deal with using software. Because railroads found it completely unworkable, they helped standardize an alternative.

Timezones

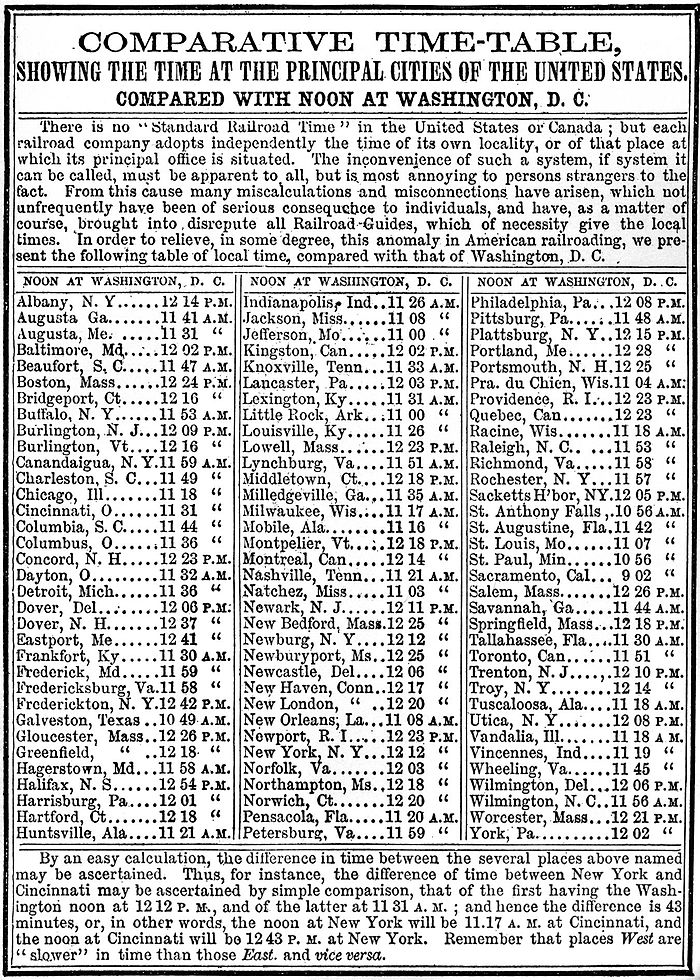

Railroads originally tried to solve the problem by coming up with a smaller number of timezones, but here, “small” means 50-100, which is still gruesome from the perspective of software development.

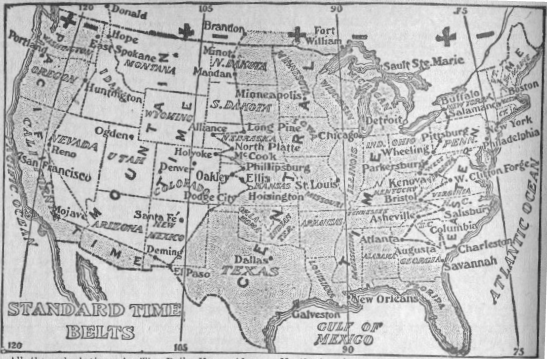

Thankfully, in 1883, they agreed upon five timezones across the US and Canada (Atlantic, Eastern, Central, Mountain, and Pacific), based on solar noon at 60, 75, 90, 105, and 120 degrees west of Greenwich, England.
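Those meridians are not arbitrary: they sit 15 degrees apart, which is exactly one hour of solar time. A small Python sketch (the dictionary and variable names are just for illustration) turns each meridian into its whole-hour offset from Greenwich:

```python
# 360 degrees of longitude / 24 hours = 15 degrees per hour.
DEGREES_PER_HOUR = 360 // 24

# Zone meridians from the 1883 agreement, in degrees west of Greenwich.
ZONE_MERIDIANS_WEST = {
    "Atlantic": 60,
    "Eastern": 75,
    "Central": 90,
    "Mountain": 105,
    "Pacific": 120,
}

# West of Greenwich means behind Greenwich, hence the negative sign.
offsets_hours = {
    name: -(degrees // DEGREES_PER_HOUR)
    for name, degrees in ZONE_MERIDIANS_WEST.items()
}

for name, hours in offsets_hours.items():
    print(f"{name}: {hours:+d} hours from Greenwich")
```

The results (-4 through -8 hours) match the standard-time offsets those zones still use today.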

With a sane number of standardized timezones, a country like the US could coordinate to YYYY-MM-DD HH:mm within its borders.

Well, mostly: Detroit refused this until 1900, then half-changed its mind to adopt EST, then changed its mind back…and finally got on board in 1905.

In 1884, the US hosted the International Meridian Conference, which established the Prime Meridian (running through Greenwich, England) and laid the groundwork for a set of timezones covering the entire globe.

Seconds

Quartz

In addition to faster transportation of people, the age of industrialization also brought faster transportation of ideas! One such medium was radio in the 1920s.

A few decades earlier, in 1880, Jacques and Pierre Curie discovered piezoelectricity, a property of certain materials that makes them generate a voltage when mechanically stressed and, conversely, deform when a voltage is applied. Placed in an electrical circuit, such a crystal vibrates at a very stable frequency. By the early 1920s, it was discovered that quartz made a pretty reliable oscillator.

Radio was very popular in the 1920s, especially among amateur operators who bought quartz oscillators to control radio frequencies. Bell Labs and GE started doing advanced research into quartz, resulting in the development of quartz-crystal clocks and military applications like radar. Most quartz crystals used in oscillators are now synthetic, and they are ubiquitous in modern electronics.

At this point, machines were able to count in time, although it didn’t mean anything… yet.

Atomic clocks

While crystal oscillators continue to be used in electronics, they have long been surpassed in terms of timekeeping accuracy by the atomic clock (not to be confused with the Doomsday Clock). Atomic clocks also use oscillation to keep track of time, but unlike crystal oscillators, they rely on the frequency of the radiation that atoms absorb or emit when moving between two energy levels, rather than on piezoelectricity. No radioactive decay is involved.

Since 1967, the SI second has been defined as 9,192,631,770 cycles of the radiation corresponding to the transition of a caesium-133 atom between two energy levels. The NIST-F1 clock, built in 1999, is accurate to ~1 second per 20 million years.

Atomic clocks would later be used for satellite navigation systems like GPS and for determining UTC time.

UTC

The late 1960s saw the rise of UTC, which stands for “Coordinated Universal Time.” You may have noticed that the English acronym should be “CUT.” The French term is “Temps Universel Coordonné,” or TUC. Since neither French- nor English-speaking officials would concede, a compromise of “UTC” was adopted.

UTC is a timekeeping standard based on atomic clocks and kept within 0.9 seconds of mean solar time at the Prime Meridian. That means that, for everyday purposes, UTC is effectively Greenwich Mean Time. It’s sometimes referred to as “Zulu time” because of the Z denoting a timezone offset of 0 (e.g. “00:00Z” being midnight in the UTC timezone). The NATO phonetic alphabet word for “Z” is “Zulu.” UTC is also responsible for handling leap seconds, which are occasional one-second corrections required because the Earth’s rotation is irregular and gradually slowing.
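To see the “Z” in action, here is a small Python sketch that parses a Zulu timestamp and shows the same instant at a fixed UTC-5 offset. The helper name `parse_zulu` is made up for illustration, and the manual swap of “Z” for “+00:00” is there because older Python versions’ `datetime.fromisoformat` does not accept a trailing “Z.”

```python
from datetime import datetime, timedelta, timezone


def parse_zulu(timestamp: str) -> datetime:
    """Parse an ISO-style timestamp, treating a trailing 'Z' as UTC."""
    if timestamp.endswith("Z"):
        timestamp = timestamp[:-1] + "+00:00"
    return datetime.fromisoformat(timestamp)


midnight_utc = parse_zulu("2020-01-01T00:00:00Z")

# A fixed offset of five hours behind UTC (no daylight saving rules here).
utc_minus_5 = timezone(timedelta(hours=-5))
same_instant = midnight_utc.astimezone(utc_minus_5)

print(midnight_utc.isoformat())   # 2020-01-01T00:00:00+00:00
print(same_instant.isoformat())   # 2019-12-31T19:00:00-05:00
```

Both values describe the same moment; only the wall-clock representation differs.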

Post-UTC, we can get the entire world (of people, not machines) coordinated to YYYY-MM-DD HH:mm:ss.

Unix time

What’s so special about January 1, 1970? Not a whole lot, actually. Unix time is a system for describing a point in time, measured in seconds, relative to 00:00:00 UTC on 1970-01-01, known as the “Unix epoch.” The Unix Programmer’s Manual, published in November 1971, marks the beginning of the epoch as Jan 1, 1971 (page 13), with the system time incrementing 60 times a second (60 Hz). System time was stored in a 32-bit variable, which would overflow in under two and a half years at 60 Hz (even at 1 Hz, a signed 32-bit counter has a “year 2038” problem).

At some point, the Unix epoch was moved back to 1970, and the frequency changed from 60Hz to 1Hz.
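The overflow arithmetic is worth checking by hand. This Python sketch assumes an unsigned 32-bit counter for the 60 Hz case and a signed 32-bit counter of seconds since 1970 for the “year 2038” case; the variable names are illustrative.

```python
from datetime import datetime, timedelta, timezone

SECONDS_PER_DAY = 86_400

# 60 Hz case: how long until 2**32 ticks have elapsed?
ticks = 2 ** 32
days_at_60hz = ticks / 60 / SECONDS_PER_DAY
years_at_60hz = days_at_60hz / 365.25
print(f"60 Hz: overflow after about {days_at_60hz:.0f} days "
      f"({years_at_60hz:.2f} years)")

# 1 Hz case: the last second a signed 32-bit counter can represent.
epoch = datetime(1970, 1, 1, tzinfo=timezone.utc)
last_second = epoch + timedelta(seconds=2 ** 31 - 1)
print(f"1 Hz: last representable second is {last_second.isoformat()}")
```

At 60 Hz the counter lasts roughly 828 days. At 1 Hz, the signed counter runs out at 2038-01-19T03:14:07 UTC.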

In 1988, everyone’s favorite ISO standard, ISO 8601, was created to standardize timestamp formatting and create human-readable times.
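Unix time and ISO 8601 complement each other: one is easy for machines to count, the other is easy for people to read. A minimal Python sketch of the conversion (the function name is hypothetical):

```python
from datetime import datetime, timezone


def unix_to_iso8601(seconds: int) -> str:
    """Render a Unix timestamp as an ISO 8601 string in UTC."""
    return datetime.fromtimestamp(seconds, tz=timezone.utc).isoformat()


print(unix_to_iso8601(0))              # 1970-01-01T00:00:00+00:00
print(unix_to_iso8601(1_000_000_000))  # 2001-09-09T01:46:40+00:00
```

Passing `tz=timezone.utc` explicitly matters here; without it, `fromtimestamp` returns the machine’s local time with no offset attached.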

In the overall picture of timekeeping, Unix time means that we can agree on a meaning for all the counting that those crystal oscillators have been doing. It also means that machines can now keep track of time independently.

Milliseconds

As the internet was created and then went mainstream, the need for better synchronization increased once again.

Network Time Protocol, or NTP, was designed in the early 1980s by David L. Mills as an “internet protocol used to synchronize the clocks of computers to some time reference.” Much like the independently operating towns of earlier decades, if a computer is working in isolation, it doesn’t matter what time it is. But as soon as you add computers and have distributed networks, timekeeping is necessary for them to act in a way that makes sense. Otherwise, you’d have messages arriving seemingly before they were sent, money withdrawn or deposited in the wrong order, and so on.

NTP allows a computer to regularly query an atomic clock (indirectly) for the time, and adjust to that time in a sane way. NTP also helps computers deal with those pesky leap seconds!
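How does a computer work out how far off its clock is when the answer itself takes time to travel over the network? The commonly described NTP approach uses four timestamps from a single request/response exchange. The sketch below is plain arithmetic with simulated numbers, not a real NTP client; the function name and sample values are made up for illustration.

```python
def ntp_offset_and_delay(t0: float, t1: float, t2: float, t3: float):
    """Estimate clock offset and round-trip delay from one exchange.

    t0: client clock when the request is sent
    t1: server clock when the request arrives
    t2: server clock when the response is sent
    t3: client clock when the response arrives
    """
    # Assumes the network delay is roughly the same in both directions.
    offset = ((t1 - t0) + (t2 - t3)) / 2
    # Total time on the wire, excluding the server's processing time.
    delay = (t3 - t0) - (t2 - t1)
    return offset, delay


# Simulated exchange: the client clock is 0.5 s behind the server,
# each network hop takes 0.1 s, and the server spends 0.01 s processing.
offset, delay = ntp_offset_and_delay(t0=100.0, t1=100.6, t2=100.61, t3=100.21)
print(f"offset ≈ {offset:.3f} s, round-trip delay ≈ {delay:.3f} s")
```

In this simulated case the estimate recovers the 0.5-second offset and a 0.2-second round trip. Real implementations repeat the exchange, filter the samples, and adjust the clock gradually rather than jumping it.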

There’s also Precision Time Protocol (PTP), an IEEE standard (IEEE 1588) that allows systems on a local network to achieve accuracy well below a millisecond.

For a quick (10-minute) introduction to NTP, check out Joel Potischman’s !!Con talk from 2017.

From timezones to oscillators to NTP, we can now keep track of time on a global scale, with accuracy to the millisecond or better, across otherwise independent machines. Pretty neat!

While it might not make working with timestamps and multiple timezones any less of a headache, hopefully this puts some of the past in perspective.

https://twitter.com/mcclure111/status/425734652892958720

This is a nice summary of how hard time is, and why often the time-handling libraries for most major languages are so large.

Nice summary, Jaime! Even getting Adafruit exposure! You rock!

Thanks Tod! : )

Awesome article Jaime! I love the accurate gif evidence of quartz reacting to applied voltage

Thanks Scott!

Greece isn’t a protestant country. 90% of Greeks are Greek Orthodox, 3% of the population is made up of Protestants and other Christian religions, 4% of the population isn’t religious, 2% is Islam and the other 1% is made up of other religions.

hi Fae, you’re right. I had another country listed there, and then found that Greece switched over even later, but then didn’t update the rest of the sentence. I’ll fix that, thanks.

I keep coming back to this article to reference it or send it to people… really fascinating. Compellingly written too – thank you Jaime!

Thanks Stephen! : )