Article summary

Varnish Cache is a popular caching HTTP reverse proxy. A while back, I wrote about using nginx as a reverse proxy. But while nginx is great as a reverse proxy, it doesn’t perform caching out of the box. Caching can be highly desirable for a website or web application that needs to serve lots of static content. Most generic websites fall into this group, while more dynamic web applications may or may not benefit from caching.

Varnish sits in front of any HTTP compatible server, and it can be configured to selectively cache the contents. It will almost certainly deliver the cached content faster than the backend — especially if the requests ordinarily take some processing on the backend, such as to render or look up a resource on the filesystem or in a database. While the default storage mechanism for Varnish is backed by the filesystem, it can be configured to allocate storage with malloc using RAM to cache directly.

Default Behavior

There are a couple of important things to know about default behavior in Varnish:

- Varnish will automatically try to cache any requests that it handles, subject to exceptions:

  - It will not cache requests which contain cookie headers or authorization headers.

  - It will not cache responses that the backend marks as uncacheable (e.g. with a Set-Cookie header or Cache-Control: max-age=0).

  - It will only cache GET and HEAD requests.

- Varnish will cache a response for 120 seconds by default. Depending on the type of requested resource, this may need to be adjusted.

- It will pass along any Expires/Last-Modified/Cache-Control headers that it receives from the backend, unless they are specifically overwritten.
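As a minimal sketch of adjusting that default, the fragment below raises the TTL for HTML responses in vcl_fetch. It uses the Varnish 3 syntax found in the rest of this article; the Content-Type match and the five-minute value are assumptions chosen for illustration, not recommendations.

```vcl
sub vcl_fetch {
    # Assumed rule for illustration: keep HTML pages for 5 minutes
    # instead of the 120-second default.
    if (beresp.http.Content-Type ~ "text/html") {
        set beresp.ttl = 300s;
    }
    # No return statement here, so the default vcl_fetch logic still runs afterwards.
}
```

Note that this overrides whatever TTL Varnish derived from the backend headers for matching responses. The default TTL can also be changed globally through the default_ttl run-time parameter, which avoids touching VCL at all.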

Subroutines

It is also important to know that requests routed through Varnish are handled by a variety of subroutines:

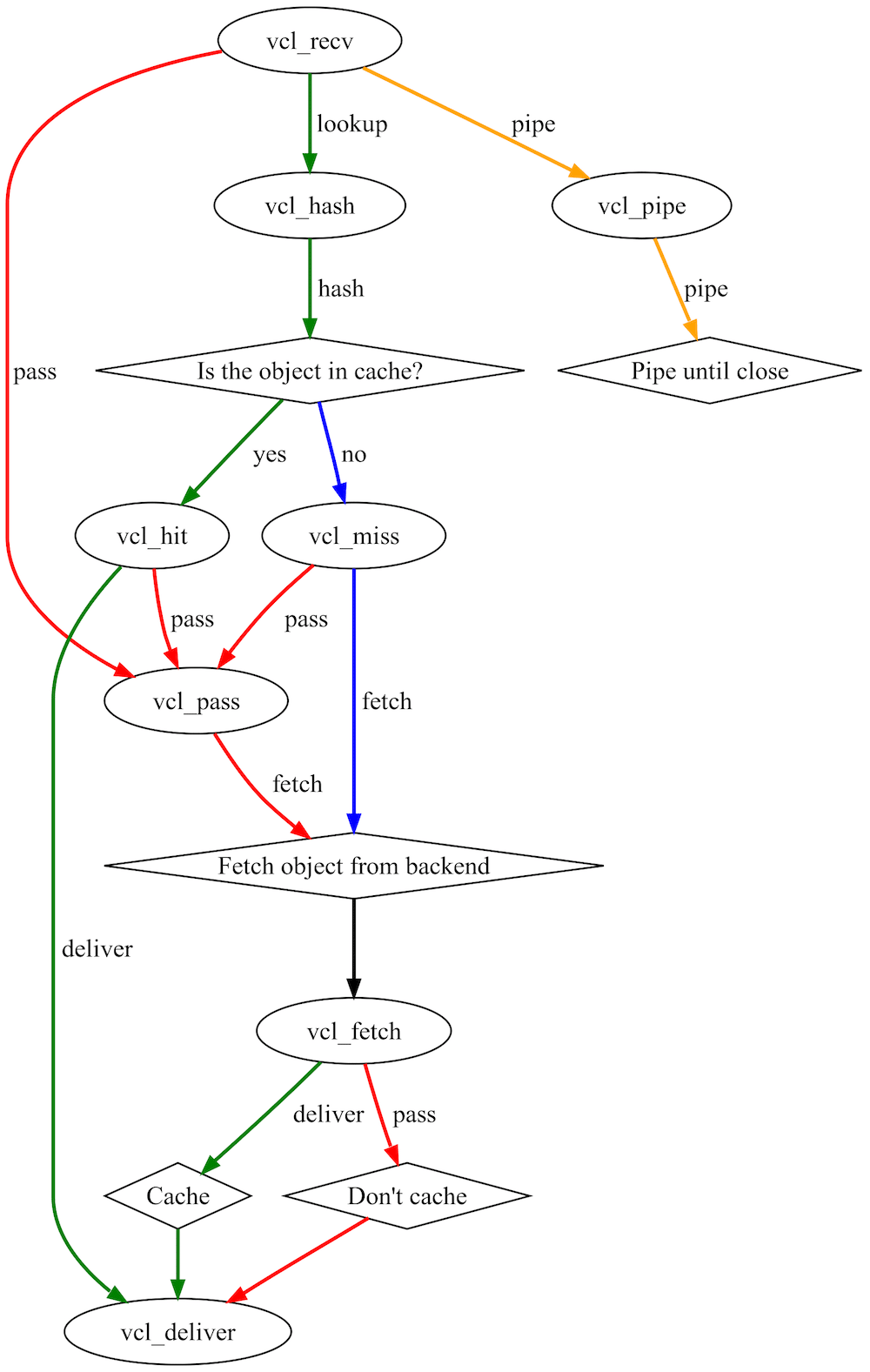

- vcl_recv: Called at the beginning of a request. It decides whether or not to serve a request, whether or not to modify a request, and which backend to use, if applicable.

- vcl_hash: Called to create a hash of request data to identify the associated object in cache.

- vcl_pass: Called if pass mode is initiated. In pass mode, the current request is passed to the backend, and the response is similarly returned without being cached.

- vcl_hit: Called if the object for the request is in the cache.

- vcl_miss: Called if the object for the request is not in the cache.

- vcl_fetch: Called after a resource has been retrieved from the backend. It decides whether or not to cache the backend response as an object, how to do so, and whether or not to modify the object before caching.

- vcl_deliver: Called before a cached object is delivered to a client.

- vcl_pipe: Called if pipe mode is initiated. In pipe mode, requests for the current connection are passed unaltered directly to the backend, and responses are similarly returned without being cached, until the connection is closed.

Actions

Each subroutine is terminated by an action. Different actions are available depending on the subroutine. Generally, each action corresponds to a subroutine:

- deliver: Insert the object into the cache (if it is cacheable). Flow will eventually pass to vcl_deliver.

- error: Return the given error code to the client.

- fetch: Retrieve the requested object from the backend. Flow will eventually pass to vcl_fetch.

- hit_for_pass: Create a hit-for-pass object, which caches the fact that this object should be passed. Flow will eventually reach vcl_deliver.

- lookup: Look up the requested object in the cache. Flow will eventually reach vcl_hit or vcl_miss, depending on whether the object is in the cache.

- pass: Activate pass mode. Flow will eventually reach vcl_pass.

- pipe: Activate pipe mode. Flow will eventually reach vcl_pipe.

- hash: Create a hash of the request data, and look up the associated object in the cache.
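To make the relationship between subroutines and actions concrete, here is a rough sketch of a vcl_recv that ends in three different actions. It is written in the Varnish 3 syntax used in this article, and the /downloads/ path is a hypothetical example rather than anything from a real configuration.

```vcl
sub vcl_recv {
    # Hypothetical path: stream large downloads straight through (pipe mode).
    if (req.url ~ "^/downloads/") {
        return (pipe);      # flow continues in vcl_pipe
    }
    # Anything other than GET or HEAD goes to the backend uncached.
    if (req.request != "GET" && req.request != "HEAD") {
        return (pass);      # flow continues in vcl_pass
    }
    # Everything else is looked up in the cache.
    return (lookup);        # flow continues in vcl_hash, then vcl_hit or vcl_miss
}
```

Because this sketch always returns explicitly, the default vcl_recv logic never runs, so requests carrying cookies would be looked up in the cache too. In practice you would usually drop the final return and let the default logic decide.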

Traffic Flow

Configuring Varnish properly requires understanding how requests and responses flow through the various subroutines. See the diagram below to get a sense of how everything fits together. Subroutines are shown in bubbles, many actions (e.g. pass, fetch, deliver) are shown beside connecting lines, and interactions with the backend and cache are shown in diamonds. For a more accurate, complete diagram, see the Varnish Wiki.

Need for Tweaks

Often, the default Varnish configuration works quite well. Generally, there are only a few reasons Varnish needs to be tweaked for your particular website or web application:

- When a request is received: rewriting a request, such as for removing cookies so the request can be served from the cache.

- When a response is returned from the backend: modifying a response, such as changing the ttl so that it can be cached.

- When a response is delivered: rewriting a response, such as overwriting backend headers so that browsers will cache content.

The Pattern

Note that the pattern is to make some tweaks, then allow the default Varnish behavior to take over. After executing your VCL code, default VCL code will be executed for the given subroutine. Unless you specifically handle or specify them, all situations, conditions and actions will fall through to default behavior. For example, if you provide VCL code for vcl_miss() to handle some particular requests, but do not specify how the remaining requests should be handled, the default VCL behavior will be to perform a fetch.

The else branch in the following is not necessary, because the default behavior for vcl_miss() is to return a fetch:

sub vcl_miss {
    if (req.request == "PURGE") {
        error 404 "Not cached";
    } else {
        return (fetch);
    }
}

This would be sufficient:

sub vcl_miss {
    if (req.request == "PURGE") {
        error 404 "Not cached";
    }
}
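For context, a fragment like this usually sits alongside a few others. The sketch below follows the common Varnish 3 purge pattern; the ACL name purge and its localhost entry are assumptions for illustration. Every request that is not a PURGE falls through to the default behavior in all three subroutines.

```vcl
# Assumed ACL: only the local machine may send PURGE requests.
acl purge {
    "localhost";
}

sub vcl_recv {
    if (req.request == "PURGE") {
        if (!client.ip ~ purge) {
            error 405 "Not allowed.";
        }
        # Look the object up so that vcl_hit or vcl_miss can respond.
        return (lookup);
    }
    # No return here: all other requests get the default vcl_recv logic.
}

sub vcl_hit {
    if (req.request == "PURGE") {
        purge;
        error 200 "Purged.";
    }
}

sub vcl_miss {
    if (req.request == "PURGE") {
        error 404 "Not cached";
    }
}
```

None of the subroutines spells out what happens to ordinary GET requests, which is the point of the pattern: only the special case is written down.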

Examples

Below are some Varnish Configuration Language (VCL) code snippets that implement some of the needed tweaks mentioned above. These are snippets that I currently use in the Varnish configurations that I manage (subject to some obfuscation, of course).

vcl_recv

Note: Default action if there are no cookies or authorization headers is to return lookup.

sub vcl_recv {
    # Many requests contain Accept-Encoding HTTP headers. We standardize and remove these when unnecessary to make it easier to cache requests
    if (req.http.Accept-Encoding) {
        # If the request URL has any of these extensions, remove the Accept-Encoding header as it is meaningless
        if (req.url ~ "\.(gif|jpg|jpeg|swf|flv|mp3|mp4|pdf|ico|png|gz|tgz|bz2)(\?.*|)$") {
            remove req.http.Accept-Encoding;
        # If the Accept-Encoding contains 'gzip' standardize it.
        } elsif (req.http.Accept-Encoding ~ "gzip") {
            set req.http.Accept-Encoding = "gzip";
        # If the Accept-Encoding contains 'deflate' standardize it.
        } elsif (req.http.Accept-Encoding ~ "deflate") {
            set req.http.Accept-Encoding = "deflate";
        # If the Accept-Encoding header isn't matched above, remove it.
        } else {
            remove req.http.Accept-Encoding;
        }
    }

    # Many requests contain cookies for resources where cookies don't matter -- such as static images or documents.
    if (req.url ~ "\.(gif|jpg|jpeg|swf|css|js|flv|mp3|mp4|pdf|ico|png)(\?.*|)$") {
        # Remove cookies from these resources, and remove any attached query strings.
        unset req.http.cookie;
        set req.url = regsub(req.url, "\?.*$", "");
    }

    # Certain cookies (such as for Google Analytics) are client-side only, and don't matter to our web application.
    if (req.http.cookie) {
        # If a request contains cookies we care about, don't cache it (return pass).
        if (req.http.cookie ~ "(mycookie1|important-cookie|myidentification-cookie)") {
            return (pass);
        } else {
            # Otherwise, remove the cookie.
            unset req.http.cookie;
        }
    }
}
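The cookie handling above removes every cookie unless an important one is present. A gentler variant, sketched here under the assumption that the client-side cookies are the classic Google Analytics __utm* ones, strips only those and leaves everything else for the default logic to judge. The regular expression is a commonly used one, not something taken from my own configuration.

```vcl
sub vcl_recv {
    if (req.http.Cookie) {
        # Drop cookies named __utma, __utmb, __utmz and so on (assumed naming).
        set req.http.Cookie = regsuball(req.http.Cookie, "(^|; ) *__utm.=[^;]+;? *", "\1");

        # If nothing is left, remove the header so the request can be cached.
        if (req.http.Cookie == "") {
            remove req.http.Cookie;
        }
    }
}
```

With this approach, a request that still carries other cookies is passed to the backend by the default vcl_recv logic, so an unknown cookie costs a cache miss rather than a broken session.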

vcl_fetch

Note: Default action if the content is cacheable is to return deliver.

sub vcl_fetch {
    # If the URL is for our login page or a preview, we never want to cache the page itself.
    if (req.url ~ "/login" || req.url ~ "preview=true") {
        # But, we can cache the fact that we don't want this page cached (return hit_for_pass).
        return (hit_for_pass);
    }

    # If the URL is for our non-admin pages, we always want them to be cached.
    if ( ! (req.url ~ "(/admin|/login)") ) {
        # Remove cookies...
        unset beresp.http.set-cookie;
        # Cache the page for 1 day
        set beresp.ttl = 86400s;
        # Remove existing Cache-Control headers...
        remove beresp.http.Cache-Control;
        # Set new Cache-Control headers for browsers to store cache for 7 days
        set beresp.http.Cache-Control = "public, max-age=604800";
    }

    # If the URL is for one of our static images or documents, we always want them to be cached.
    if (req.url ~ "\.(gif|jpg|jpeg|swf|css|js|flv|mp3|mp4|pdf|ico|png)(\?.*|)$") {
        # Remove cookies...
        unset beresp.http.set-cookie;
        # Cache the object for 365 days.
        set beresp.ttl = 365d;
        # Remove existing Cache-Control headers...
        remove beresp.http.Cache-Control;
        # Set new Cache-Control headers for browsers to store cache for 7 days
        set beresp.http.Cache-Control = "public, max-age=604800";
    }
}

vcl_deliver

Note: Default action is to return deliver, which actually delivers the response/object to the client.

sub vcl_deliver {
    # Sometimes it's nice to see when content has been served from the cache.
    if (obj.hits > 0) {
        # If the object came from the cache, set an HTTP header to say so
        set resp.http.X-Cache = "HIT";
    } else {
        set resp.http.X-Cache = "MISS";
    }

    # For security and aesthetic reasons, remove some HTTP headers before final delivery...
    remove resp.http.Server;
    remove resp.http.X-Powered-By;
    remove resp.http.Via;
    remove resp.http.X-Varnish;
}

Conclusion

Varnish is an extremely powerful caching HTTP reverse proxy. By default, it behaves sensibly, and the general pattern for configuration is to make tweaks and allow the Varnish default behavior to handle the rest.

Even though the configuration can seem intimidating (there are lots of concepts to absorb and options to look over), the ability to make small tweaks without needing to completely rewrite behavior makes it manageable. The VCL wiki and book provide a wealth of information, including extremely detailed descriptions. These resources are often overwhelming because of the level of detail. I hope that this post will jump-start your understanding of Varnish enough for you to make use of it and to follow the full documentation.

Hi,

There is something we need to know about Varnish. Do you think the following WordPress settings might have a negative effect on ad impressions (which contain JavaScript)?

/* SET THE HOST AND PORT OF WORDPRESS
 * *********************************************************/
vcl 4.0;

import std;

backend default {
    .host = "******";
    .port = "8080";
    .connect_timeout = 600s;
    .first_byte_timeout = 600s;
    .between_bytes_timeout = 600s;
    .max_connections = 800;
}

# SET THE ALLOWED IP OF PURGE REQUESTS
# ##########################################################
acl purge {
    "localhost";
    "127.0.0.1";
}

#THE RECV FUNCTION
# ##########################################################
sub vcl_recv {
    if (req.http.Host == "akillitelefon.com/forum" || req.url ~ "forum") {
        return (pass);
    }

    # set realIP by trimming CloudFlare IP which will be used for various checks
    set req.http.X-Actual-IP = regsub(req.http.X-Forwarded-For, "[, ].*$", "");

    # FORWARD THE IP OF THE REQUEST
    if (req.restarts == 0) {
        if (req.http.x-forwarded-for) {
            set req.http.X-Forwarded-For =
                req.http.X-Forwarded-For + ", " + client.ip;
        } else {
            set req.http.X-Forwarded-For = client.ip;
        }
    }

    # Purge request check sections for hash_always_miss, purge and ban
    # BLOCK IF NOT IP is not in purge acl
    # ##########################################################

    # Enable smart refreshing using hash_always_miss
    if (req.http.Cache-Control ~ "no-cache") {
        if (client.ip ~ purge || !std.ip(req.http.X-Actual-IP, "1.2.3.4") ~ purge) {
            set req.hash_always_miss = true;
        }
    }

    if (req.method == "PURGE") {
        if (!client.ip ~ purge || !std.ip(req.http.X-Actual-IP, "1.2.3.4") ~ purge) {
            return (synth(405, "Not allowed."));
        }
        return (purge);
    }

    if (req.method == "BAN") {
        # Same ACL check as above:
        if (!client.ip ~ purge || !std.ip(req.http.X-Actual-IP, "1.2.3.4") ~ purge) {
            return (synth(403, "Not allowed."));
        }
        ban("req.http.host == " + req.http.host +
            " && req.url == " + req.url);
        # Throw a synthetic page so the
        # request won't go to the backend.
        return (synth(200, "Ban added"));
    }

    # For Testing: If you want to test with Varnish passing (not caching) uncomment
    # return( pass );

    # FORWARD THE IP OF THE REQUEST
    if (req.restarts == 0) {
        if (req.http.x-forwarded-for) {
            set req.http.X-Forwarded-For =
                req.http.X-Forwarded-For + ", " + client.ip;
        } else {
            set req.http.X-Forwarded-For = client.ip;
        }
    }

    # DO NOT CACHE RSS FEED
    if (req.url ~ "/feed(/)?") {
        return (pass);
    }

    ## Do not cache search results, comment these 3 lines if you do want to cache them
    if (req.url ~ "/\?s\=") {
        return (pass);
    }

    # CLEAN UP THE ENCODING HEADER.
    # SET TO GZIP, DEFLATE, OR REMOVE ENTIRELY. WITH VARY ACCEPT-ENCODING
    # VARNISH WILL CREATE SEPARATE CACHES FOR EACH
    # DO NOT ACCEPT-ENCODING IMAGES, ZIPPED FILES, AUDIO, ETC.
    # ##########################################################
    if (req.http.Accept-Encoding) {
        if (req.url ~ "\.(jpg|png|gif|gz|tgz|bz2|tbz|mp3|ogg)$") {
            # No point in compressing these
            unset req.http.Accept-Encoding;
        } elsif (req.http.Accept-Encoding ~ "gzip") {
            set req.http.Accept-Encoding = "gzip";
        } elsif (req.http.Accept-Encoding ~ "deflate") {
            set req.http.Accept-Encoding = "deflate";
        } else {
            # unknown algorithm
            unset req.http.Accept-Encoding;
        }
    }

    # PIPE ALL NON-STANDARD REQUESTS
    # ##########################################################
    if (req.method != "GET" &&
        req.method != "HEAD" &&
        req.method != "PUT" &&
        req.method != "POST" &&
        req.method != "TRACE" &&
        req.method != "OPTIONS" &&
        req.method != "DELETE") {
        return (pipe);
    }

    # ONLY CACHE GET AND HEAD REQUESTS
    # ##########################################################
    if (req.method != "GET" && req.method != "HEAD") {
        return (pass);
    }

    # OPTIONAL: DO NOT CACHE LOGGED IN USERS (THIS OCCURS IN FETCH TOO, EITHER
    # COMMENT OR UNCOMMENT BOTH
    # ##########################################################
    if ( req.http.cookie ~ "wordpress_logged_in" ) {
        return (pass);
    }

    # IF THE REQUEST IS NOT FOR A PREVIEW, WP-ADMIN OR WP-LOGIN
    # THEN UNSET THE COOKIES
    # ##########################################################
    if (!(req.url ~ "wp-(login|admin)")
        && !(req.url ~ "&preview=true" )
    ){
        unset req.http.cookie;
    }

    # IF BASIC AUTH IS ON THEN DO NOT CACHE
    # ##########################################################
    if (req.http.Authorization || req.http.Cookie) {
        return (pass);
    }

    # IF YOU GET HERE THEN THIS REQUEST SHOULD BE CACHED
    # ##########################################################
    return (hash);

    # This is for phpmyadmin
    if (req.http.Host == "ki1.org") {
        return (pass);
    }
    if (req.http.Host == "mysql.ki1.org") {
        return (pass);
    }
}

# HIT FUNCTION
# ##########################################################
sub vcl_hit {
    # IF THIS IS A PURGE REQUEST THEN DO THE PURGE
    # ##########################################################
    if (req.method == "PURGE") {
        #
        # This is now handled in vcl_recv.
        #
        # purge;
        return (synth(200, "Purged."));
    }
    return (deliver);
}

# MISS FUNCTION
# ##########################################################
sub vcl_miss {
    if (req.method == "PURGE") {
        #
        # This is now handled in vcl_recv.
        #
        # purge;
        return (synth(200, "Purged."));
    }
    return (fetch);
}

# FETCH FUNCTION
# ##########################################################
sub vcl_backend_response {
    # I SET THE VARY TO ACCEPT-ENCODING, THIS OVERRIDES W3TC
    # TENDANCY TO SET VARY USER-AGENT. YOU MAY OR MAY NOT WANT
    # TO DO THIS
    # ##########################################################
    set beresp.http.Vary = "Accept-Encoding";

    # IF NOT WP-ADMIN THEN UNSET COOKIES AND SET THE AMOUNT OF
    # TIME THIS PAGE WILL STAY CACHED (TTL)
    # ##########################################################
    if (!(bereq.url ~ "wp-(login|admin)|forum") && !bereq.http.cookie ~ "wordpress_logged_in" && !bereq.http.host == "akillitelefon.com/forum" ) {
        unset beresp.http.set-cookie;
        set beresp.ttl = 52w;
        # set beresp.grace =1w;
    }

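    # NOTE: the config is garbled at this point (the posted line reads
    # "if (beresp.ttl 0) {"), and the rest of vcl_backend_response appears to
    # be lost. Because resp.* is only available in vcl_deliver, the X-Cache
    # logic is assumed to belong there, testing obj.hits.
}

sub vcl_deliver {
    if (obj.hits > 0) {
        set resp.http.X-Cache = "HIT";
    # IF THIS IS A MISS RETURN THAT IN THE HEADER
    # ##########################################################
    } else {
        set resp.http.X-Cache = "MISS";
    }
}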

The above is an example of our configuration.

We suspect there may be an error in the AdServer service, but since installing Varnish our ad impressions have dropped dramatically. Could Varnish be having an impact on this?

Thanks.