It’s been almost a year since my posts on Excel workbook snapshot testing and PDF snapshot testing. Both of these have proven to be very valuable in our automated test suite and have allowed us to easily catch regressions, manually inspect the differences, and then update as needed.

Another task on my current project has allowed me to revisit this approach—this time for snapshot testing of the HTML files that we generate for inclusion in daily email reports. Since our use case is single-file HTML documents, the solution is quite simple and can be adapted from my earlier snapshot testing solutions.

Setup

I used a few Node modules to simplify the code. To install these Node modules on a Mac, we can use:

    yarn add lodash diff colors

Code

First, as in my previous solutions, we’ll define the paths that we’ll use to store our expected and actual HTML files. The HTML files are stored in a sub-directory (__html-snapshots) so that they will be close to our test code and easy to find.

    const reportPath = path.join(__dirname, '__html-snapshots');
    const actualFileName = path.join(reportPath, 'actual.html');
    const expectedFileName = path.join(reportPath, 'expected.html');

In our case, we generate the HTML file in memory and push it into a database table that serves as our email queuing system. For the purposes of our test, we need a simple utility function to write the actual contents to a file on disk for comparison with our expected file.

    // Write the in-memory HTML to disk so it can be compared with the expected file.
    const writeActualHtmlFile = async (data: any, outputFilePath: string): Promise<void> => {
      const outputDirectoryPath = path.dirname(outputFilePath);
      await mkdirIfNotExists(outputDirectoryPath);

      return new Promise<void>((resolve, reject) => {
        fs.writeFile(outputFilePath, data, (err) => {
          if (err) {
            reject(err);
          } else {
            resolve();
          }
        });
      });
    };

Then, we'll define a function to compare our newly generated (actual) HTML file against our expected HTML file. The comparison itself is delegated to a compareFiles function that we define later. When the files don't match, we'll output the differences in a color-coded fashion, as we did before with our Excel differencing.

    export const isHtmlEqual = (aFilePath: string, bFilePath: string) => {
      const aFileContents = fs.readFileSync(aFilePath).toString();
      const bFileContents = fs.readFileSync(bFilePath).toString();
      const areFilesEqual = compareFiles(aFileContents, bFileContents);

      if (!areFilesEqual) {
        console.info('-------------------------------------------------------------------------------');
        console.info(`${aFilePath} <==> ${bFilePath}:`);
        console.info('-------------------------------------------------------------------------------');

        // Print a line-by-line diff so the mismatch is easy to inspect.
        const results = diff.diffLines(aFileContents, bFileContents);
        for (const result of results) {
          const formattedLine = colorFormat(result);
          if (formattedLine) {
            console.info(trimEnd(formattedLine));
          }
        }

        console.info('-------------------------------------------------------------------------------');
        console.info();
      }

      return areFilesEqual;
    };

Next, we'll set up our imports and the color theme used for the diff output:

    import * as fs from 'fs';
    import * as path from 'path';
    import * as _ from 'lodash';
    import * as diff from 'diff';
    // Assumes chai is already available in the test suite (used by snapshot).
    import { expect } from 'chai';

    const colors = require('colors');

    // set up some reasonable colors
    colors.setTheme({
      filePath: 'grey',
      unchanged: 'grey',
      added: 'red',
      removed: 'green',
    });

Then, we'll define a very simple function to compare the actual and expected files as strings. In our case, the files never grew large enough to make a plain string comparison impractical.

    const compareFiles = (aFileContents: string, bFileContents: string) => {
      return aFileContents === bFileContents;
    };
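Because this is a strict string comparison, two files that differ only in line endings (for example, a snapshot checked out with CRLF on one machine and LF on another) will be reported as different. If that ever becomes a problem, one option is to normalize line endings before comparing. The following is only a sketch and not part of our code; `normalizeLineEndings` and `compareFilesNormalized` are hypothetical names.

```typescript
// Hypothetical helper: convert CRLF and lone CR line endings to LF.
const normalizeLineEndings = (text: string): string => text.replace(/\r\n?/g, '\n');

// Hypothetical variant of compareFiles that ignores line-ending differences only.
const compareFilesNormalized = (aFileContents: string, bFileContents: string): boolean => {
  return normalizeLineEndings(aFileContents) === normalizeLineEndings(bFileContents);
};

console.info(compareFilesNormalized('<p>Hi</p>\r\n', '<p>Hi</p>\n')); // true
console.info(compareFilesNormalized('<p>Hi</p>\n', '<p>Bye</p>\n')); // false
```

Any other difference, including whitespace inside a line, still counts as a mismatch.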

Finally, we’ll define a few functions to nicely format the differences for humans to read.

    // In a diffLines result, `added` parts exist only in the second input and
    // `removed` parts exist only in the first.
    const colorFormat = (result: diff.IDiffResult): string => {
      const value = result.value;
      if (result.added) {
        return colors.added(formattedLine(value, '-'));
      }
      if (result.removed) {
        return colors.removed(formattedLine(value, '+'));
      }
      return colors.unchanged(formattedLine(value, ' '));
    };

    const formattedLine = (line: string, prefix: string): string => addLinePrefix(line, prefix);

    // Prefix every non-blank line of a (possibly multi-line) diff part.
    const addLinePrefix = (line: string, prefix: string): string => {
      return trimEnd(line)
        .split(/(\r\n|\n|\r)/)
        .filter((segment) => segment.trim().length > 0)
        .map((segment) => `${prefix} ${trimEnd(segment)}`)
        .join('\n');
    };

    // Strip trailing whitespace, including BOM and non-breaking spaces.
    const trimEnd = (text: string) => {
      return text.replace(/[\s\uFEFF\xA0]+$/g, '');
    };

Now, we'll define a snapshot function which operates as we did with our Excel and PDF testing:

- When the expected HTML file does not exist, we will simply copy the actual HTML file onto the expected HTML file and pass the test.

- When the expected HTML file does exist, we will compare it against the actual HTML file and issue an error if they do not match.

When the expected and actual HTML do not match, we can do a manual inspection. When we are satisfied, we can rerun the test with the UPDATE environment variable set. This will overwrite the expected HTML file with the actual HTML file and pass the test.
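The `snapshot` function relies on `exists` and `copyFile` helpers, and `writeActualHtmlFile` relies on `mkdirIfNotExists`; none of these are shown in this post. As a rough idea of what they might look like, here is a minimal sketch built on Node's `fs.promises` API. The implementations are assumptions, not the helpers from our project, and `demo` is a hypothetical usage example.

```typescript
import * as fs from 'fs';
import * as os from 'os';
import * as path from 'path';

// Assumed helper: resolves to true when the path can be accessed, false otherwise.
const exists = async (filePath: string): Promise<boolean> => {
  try {
    await fs.promises.access(filePath);
    return true;
  } catch {
    return false;
  }
};

// Assumed helper: creates the directory and any missing parents; no-op if it exists.
const mkdirIfNotExists = async (directoryPath: string): Promise<void> => {
  await fs.promises.mkdir(directoryPath, { recursive: true });
};

// Assumed helper: copies source to destination, overwriting an existing file.
const copyFile = async (sourcePath: string, destinationPath: string): Promise<void> => {
  await fs.promises.copyFile(sourcePath, destinationPath);
};

// Hypothetical usage: exercise the helpers in a throwaway temp directory and
// report whether the expected file existed before and after the copy.
const demo = async (): Promise<boolean[]> => {
  const dir = await fs.promises.mkdtemp(path.join(os.tmpdir(), 'html-snapshots-'));
  const nested = path.join(dir, '__html-snapshots');
  await mkdirIfNotExists(nested);
  await mkdirIfNotExists(nested); // second call does nothing
  const actual = path.join(nested, 'actual.html');
  const expected = path.join(nested, 'expected.html');
  await fs.promises.writeFile(actual, '<p>Hi</p>');
  const before = await exists(expected);
  await copyFile(actual, expected);
  const after = await exists(expected);
  return [before, after];
};
```

Calling `demo()` should resolve to `[false, true]`: the expected file is absent at first and present once the actual file has been copied over it.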

    export const snapshot = async (actualFilePath: string, expectedFilePath: string) => {
      if (process.env.UPDATE || !(await exists(expectedFilePath))) {
        // No snapshot yet, or an update was requested: accept the actual file.
        await copyFile(actualFilePath, expectedFilePath);
      } else {
        const helpText = [
          '',
          '-------------------------------------------------------',
          `Actual contents of HTML file did not match expected contents.`,
          `Expected: ${expectedFilePath}`,
          `Actual: ${actualFilePath}`,
          '-------------------------------------------------------',
          '',
        ].join('\n');

        const isDocumentEqual = await isHtmlEqual(expectedFilePath, actualFilePath);
        if (!isDocumentEqual) {
          console.error(helpText);
        }

        return expect(isDocumentEqual, 'HTML documents are not equal').to.be.true;
      }
    };

Finally, we'll write a simple test which exercises this method.

    describe('HTML Files', () => {
      it('can generate an HTML file', async () => {
        // generate test data
        generateTestData();

        // fetch actual daily email report
        const actualHtml = await getDailyEmailReport();

        // write the email to our actual path.
        await writeActualHtmlFile(actualHtml, actualFileName);

        // compare snapshot of actual and expected HTML files.
        await snapshot(actualFileName, expectedFileName);
      });
    });

Execution

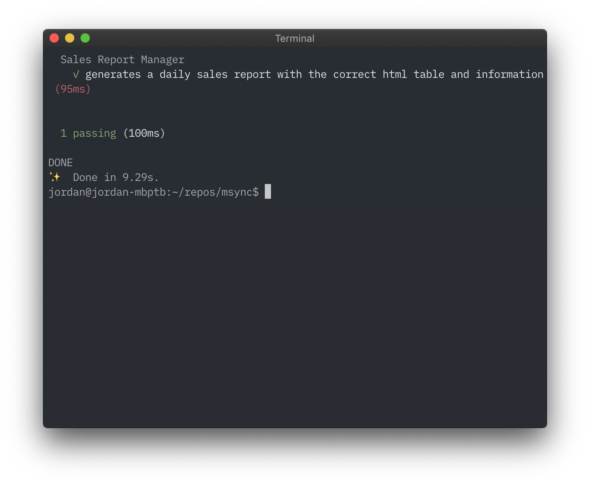

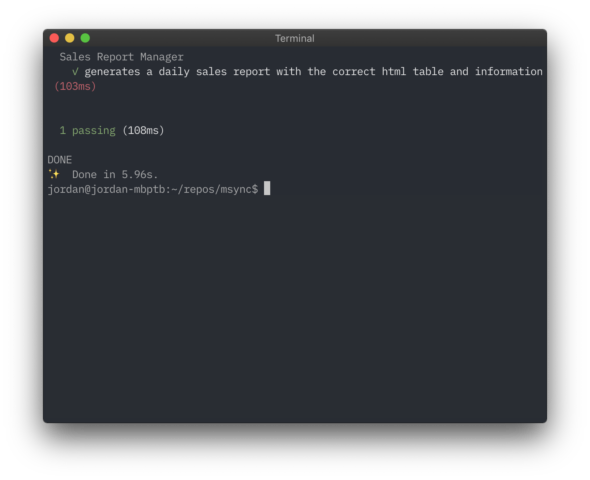

First, we’ll run the test.

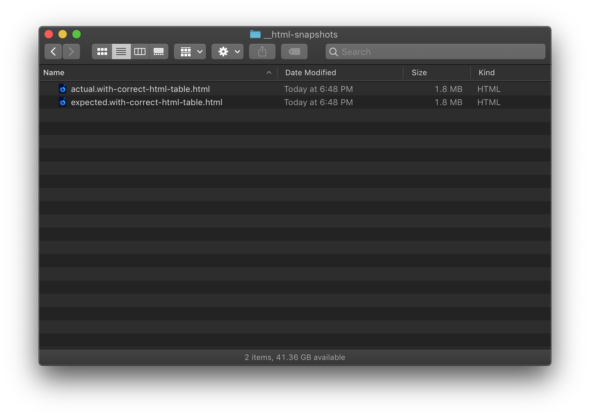

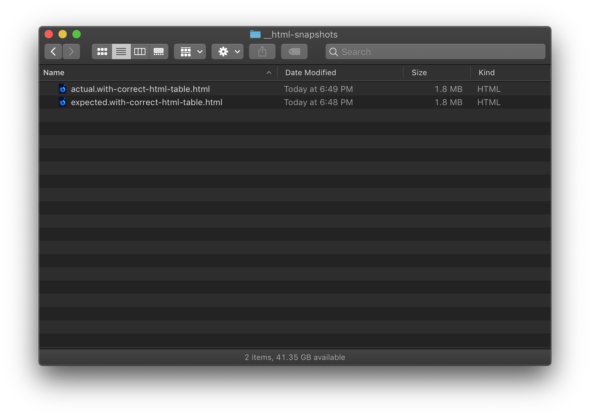

We can see that both the actual and expected HTML files have the same timestamp.

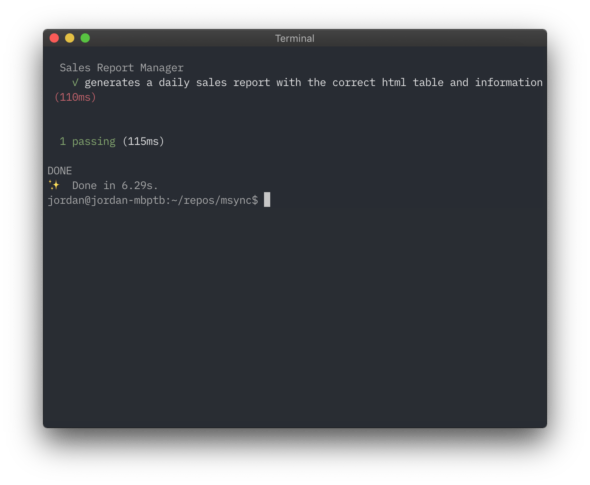

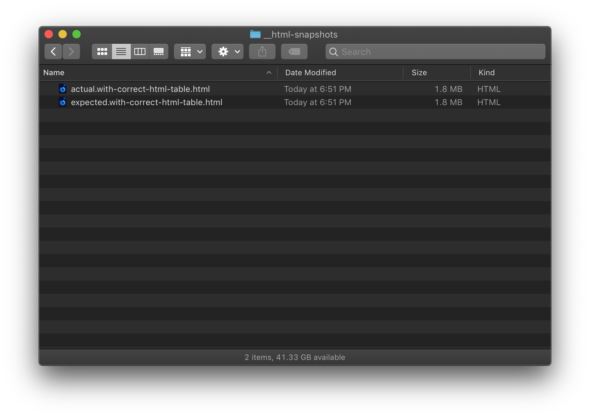

Next, we’ll run our test again to see that only the actual HTML file has been updated.

We can see that the timestamp for the actual HTML file has changed, but the expected HTML file hasn’t.

Then, we’ll modify our implementation and re-run our test.

We can see that our test detected a change between the actual and expected HTML files and reported it as a test failure.
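To give a sense of what the failure output looks like in text form, here is a small sketch that applies the same prefix logic as `colorFormat` (without the colors) to a few hand-written diff parts. The `ChangeLike` interface, `plainFormat`, and the sample data are hypothetical and for illustration only; in the real code the parts come from `diff.diffLines`.

```typescript
// Only the fields of a diff part that the formatter reads.
interface ChangeLike {
  value: string;
  added?: boolean;
  removed?: boolean;
}

const trimEnd = (text: string): string => text.replace(/[\s\uFEFF\xA0]+$/g, '');

// Prefix every non-blank line of a (possibly multi-line) diff part.
const addLinePrefix = (line: string, prefix: string): string =>
  trimEnd(line)
    .split(/(\r\n|\n|\r)/)
    .filter((segment) => segment.trim().length > 0)
    .map((segment) => `${prefix} ${trimEnd(segment)}`)
    .join('\n');

// Same prefix mapping as colorFormat in this post, minus the colors.
const plainFormat = (change: ChangeLike): string => {
  if (change.added) {
    return addLinePrefix(change.value, '-');
  }
  if (change.removed) {
    return addLinePrefix(change.value, '+');
  }
  return addLinePrefix(change.value, ' ');
};

// Hand-written sample parts (hypothetical data, not real diffLines output).
const sampleChanges: ChangeLike[] = [
  { value: '<h1>Daily Report</h1>\n' },
  { value: '<p>Total: 3</p>\n', removed: true },
  { value: '<p>Total: 4</p>\n', added: true },
  { value: '</body>\n' },
];

const formattedDiff = sampleChanges.map(plainFormat).join('\n');
console.info(formattedDiff);
// Prints:
//   <h1>Daily Report</h1>
// + <p>Total: 3</p>
// - <p>Total: 4</p>
//   </body>
```

Since `snapshot` passes the expected file first, a `+` line here is one that appears only in the expected file and a `-` line is one that appears only in the actual file.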

At this point, we will manually inspect the expected HTML file and actual HTML file to visually compare the two.

If, after manually inspecting the expected and actual HTML file, we find that these changes are acceptable, we can simply re-run our test with the UPDATE environment variable set.

Finally, we can see that the timestamp of the expected HTML file is updated.
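One detail about the UPDATE variable is worth keeping in mind: `process.env.UPDATE` is a string whenever it is set, so the check in `snapshot` treats any non-empty value as a request to update, including `UPDATE=false` or `UPDATE=0`. If a stricter check is preferred, something like the following sketch could be used. `isUpdateRequested` is a hypothetical helper, and the list of accepted values is an arbitrary choice.

```typescript
// Hypothetical helper: only explicit opt-in values trigger a snapshot update.
const isUpdateRequested = (value: string | undefined): boolean => {
  if (value === undefined) {
    return false;
  }
  const normalized = value.trim().toLowerCase();
  return ['1', 'true', 'yes'].indexOf(normalized) !== -1;
};

console.info(Boolean('false'));             // true: how a plain truthiness check sees UPDATE=false
console.info(isUpdateRequested('false'));   // false
console.info(isUpdateRequested('1'));       // true
console.info(isUpdateRequested(undefined)); // false
```

With this in place, the condition in `snapshot` would call `isUpdateRequested(process.env.UPDATE)` instead of testing `process.env.UPDATE` directly.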

We can add this new expected HTML file to our repo and commit. If we are using a continuous integration environment, we will automatically see a test failure when the actual output differs from the expected output.
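One caveat for continuous integration: because `snapshot` creates the expected file whenever it is missing, a CI run on a checkout where `expected.html` was never committed would pass without comparing anything. A possible guard is sketched below as a pure decision function. `decideSnapshotAction`, `SnapshotContext`, and the `isCi` flag are hypothetical; many CI services set a `CI` environment variable that could feed `isCi`, but that is an assumption to verify for your environment.

```typescript
type SnapshotAction = 'write' | 'compare' | 'fail-missing';

interface SnapshotContext {
  updateRequested: boolean; // e.g. derived from process.env.UPDATE
  expectedExists: boolean;  // whether the expected HTML file is on disk
  isCi: boolean;            // assumption: derived from a CI environment variable
}

// Decide what the snapshot step should do for a given situation.
const decideSnapshotAction = (context: SnapshotContext): SnapshotAction => {
  if (context.isCi) {
    // Never write snapshots on CI; a missing file means it was not committed.
    return context.expectedExists ? 'compare' : 'fail-missing';
  }
  if (context.updateRequested || !context.expectedExists) {
    return 'write';
  }
  return 'compare';
};

console.info(decideSnapshotAction({ updateRequested: false, expectedExists: false, isCi: false })); // write
console.info(decideSnapshotAction({ updateRequested: false, expectedExists: false, isCi: true }));  // fail-missing
console.info(decideSnapshotAction({ updateRequested: false, expectedExists: true, isCi: true }));   // compare
```

Locally the behavior matches the `snapshot` function in this post; only the CI branch differs.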

And that’s all there is to it!