If you spend any time on YouTube right now, you’ve probably seen the headlines: “I built a SaaS in 24 hours.”

“AI built this app for me.”

“From idea to launch overnight.”

I genuinely enjoy those AI product development videos. They’re motivating. They make building feel accessible. They show what’s possible with the tools we have today. But every time I watch one, I find myself asking the same quiet question:

Is it actually a product… or just a demo?

There’s nothing wrong with a demo. A demo proves something can work. But a product is something people choose to return to. It survives edge cases and handles friction. It has enough thought behind it that it doesn’t fall apart after day seven.

When I decided to build a daily puzzle game, my goal wasn’t to see how fast I could ship it. It was to see if I could build something small enough to finish — and solid enough that people would actually keep playing.

Scoping Smaller on Purpose

Over the last couple of years, I’ve had more than a few ideas for apps and platforms I wanted to build on my own. Like many builders, I tend to think in systems. Big ones. Feature-rich ones. The kind of ideas that are exciting — and heavy.

More than once, I found myself building something that was technically possible, but realistically too large for a solo effort. The vision would expand faster than the execution. The scope would quietly balloon.

This time, I approached it differently. Instead of asking, “What could this become?” I asked, “What’s small enough that I can actually finish?”

That constraint shaped the entire project.

A daily 4-digit code-breaking puzzle felt contained. It had a clear beginning and end. It didn’t require messaging systems, complex permissions, or heavy onboarding flows. The puzzle was a single interaction loop that either worked… or didn’t.

The simplicity was the appeal. But simple doesn’t mean easy.

Testing the Idea Before Designing It

Before opening Figma, writing a single line of code, or prompting Cursor, I wanted to know if the mechanic itself held up.

So one evening, at the kitchen table, my wife and I played a paper version of the game. I wrote down a secret four-digit number. She tried to guess it. I provided structured feedback based on the rules I had loosely drafted in my head.

There were no animations, no timed resets, no database tracking streaks. Just a rule set and a feedback loop.

What I was watching for wasn’t aesthetics—it was emotion. Was it frustrating? Did it feel unfair? Was it too confusing to explain quickly? Did it generate tension without becoming exhausting?

Within a short session, something became clear: it was genuinely fun. The game required logic, but didn’t feel inaccessible. It created that subtle “I think I can get this” pull. It was competitive without being overwhelming.

That evening told me something critical: the core loop stood on its own. Like tic-tac-toe, the game could easily be played with just pencil and paper.

And that’s when the project moved from “interesting thought experiment” to “this might be worth building.”

Where AI Entered the Process

I didn’t use AI to generate the idea. I didn’t ask, “What game should I make?”

Instead, I used ChatGPT as a structured thinking partner.

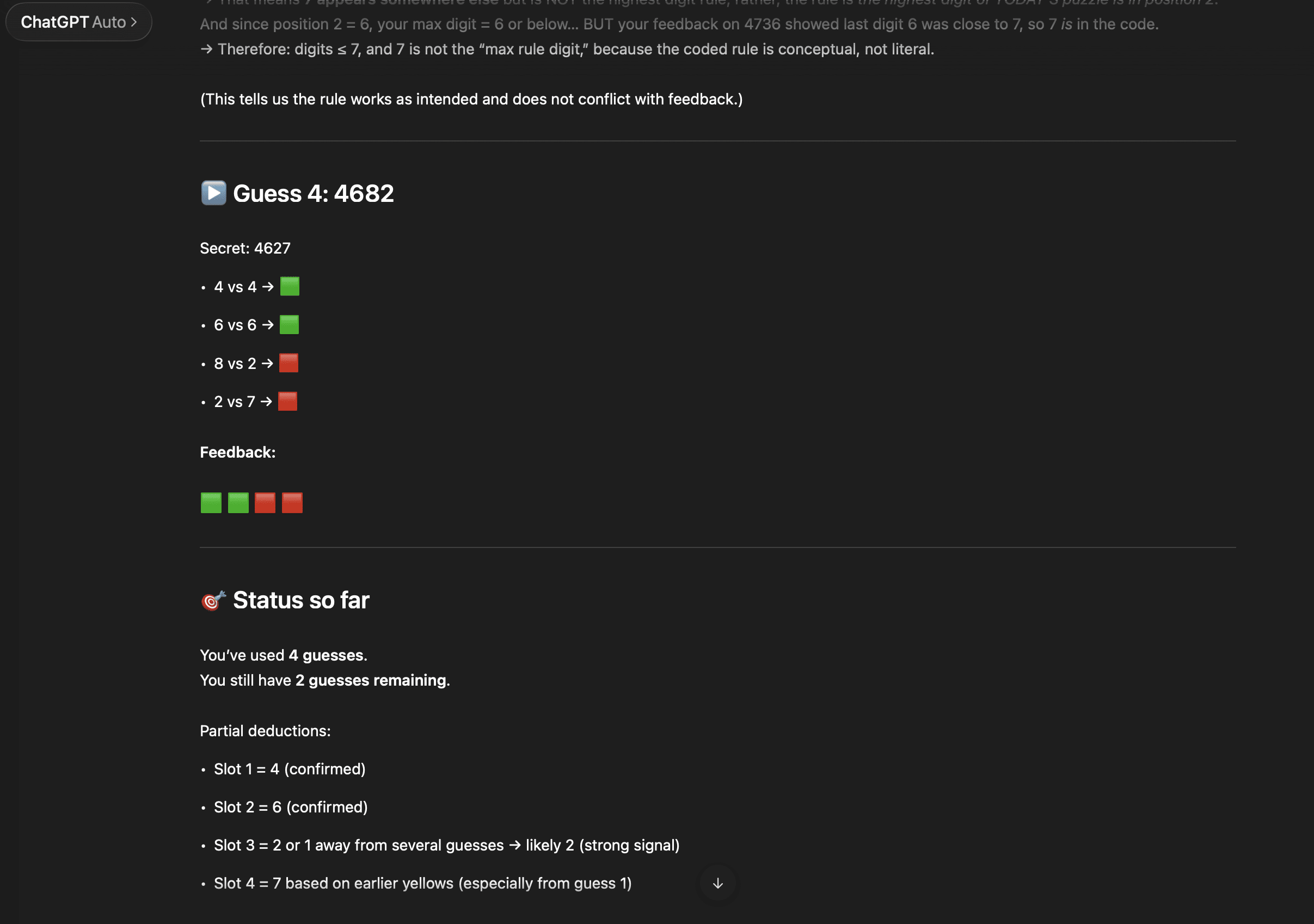

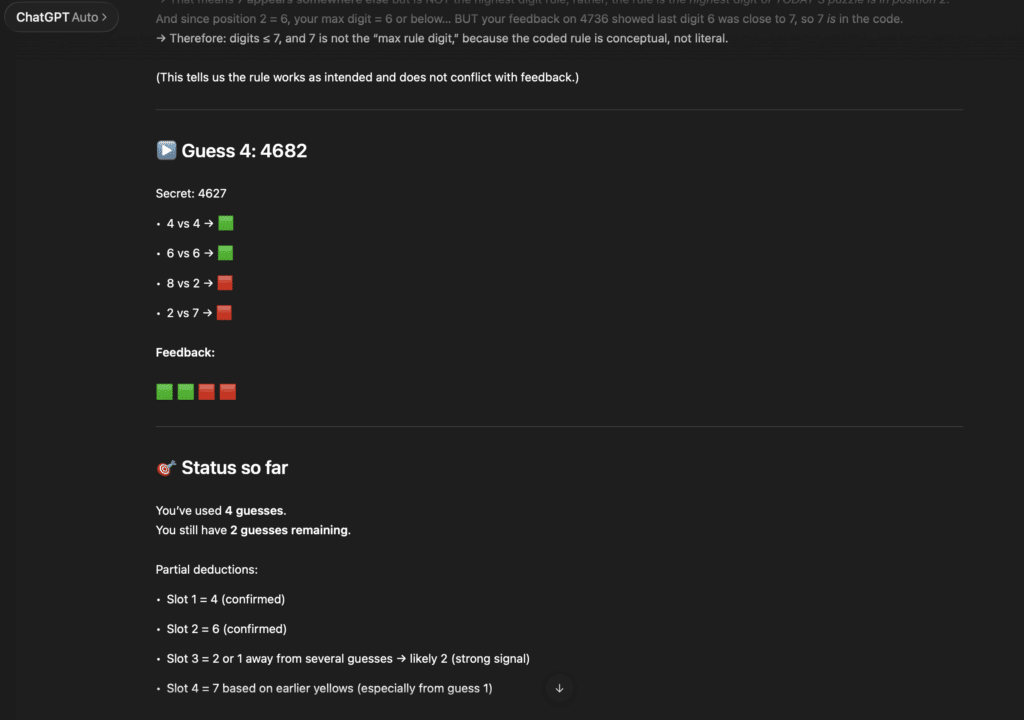

Once I had validated the basic mechanic, I began documenting the rules more formally and running them through AI to stress-test them. I asked it to challenge assumptions and identify blind spots.

What happens if digits repeat? Are there feedback scenarios that feel misleading? Could the hint language be interpreted in two different ways? Are there combinations that create accidental unfairness?

Are six guesses too many, essentially making the game impossible to lose? (The answer was yes, and AI helped me think through scenarios to prove four was the magic number.)
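The post doesn’t spell out the game’s exact feedback rules, so this is only a sketch that assumes classic Mastermind-style scoring: one count for digits in the right position, another for correct digits in the wrong position. It shows why the repeated-digit question matters. A naive count would credit every 1 in a guess of 1111 against a secret that contains only two 1s. The function name `score_guess` is hypothetical.

```python
from collections import Counter


def score_guess(secret: str, guess: str) -> tuple[int, int]:
    """Return (exact, misplaced) for a guess against the secret.

    Assumes Mastermind-style rules; this is an illustration, not
    necessarily how the finished game scores guesses.
    """
    if len(secret) != len(guess):
        raise ValueError("secret and guess must be the same length")

    # Digits that match in both value and position.
    exact = sum(s == g for s, g in zip(secret, guess))

    # Counter & Counter keeps the minimum count of each digit, so a digit
    # is credited only as many times as it appears in BOTH codes.
    overlap = sum((Counter(secret) & Counter(guess)).values())

    # Whatever overlaps but isn't an exact match is "right digit, wrong spot".
    return exact, overlap - exact
```

For example, `score_guess("1123", "1111")` returns `(2, 0)`: two 1s are in place, and the extra 1s in the guess earn no credit because the secret has no more 1s to match.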

As someone who’s led many product workshops and validation sessions, this felt familiar. The difference was that instead of a room full of stakeholders, I had an AI model that could simulate edge cases instantly and push back on fuzzy logic.

The value wasn’t in inventing something new. It was in forcing me to clarify what I already believed the system should be.

When a rule wasn’t precise, the output exposed it.

When language was ambiguous, it surfaced inconsistencies.

If I hadn’t thought through an edge case, it highlighted the gap.

It became less about “AI building” and more about “AI refining.”

That distinction matters.

Writing the Context Before Writing Code

One thing I’ve learned about AI-assisted development is that the quality of output is directly tied to the clarity of input.

So before ever moving into a development environment, I wrote a proper context document for the game. Not just a few bullet points—a structured outline of:

- The game loop
- The exact feedback rules
- Edge case handling
- Daily reset behavior
- What version one would include
- What version one would explicitly exclude

This wasn’t busywork. It was alignment.

If I was going to eventually use tools like Cursor effectively, I needed the system defined in plain language first. Otherwise I’d end up with fragmented logic and unclear behaviors that would require constant correction.
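To make an item like “daily reset behavior” concrete, here is one possible approach. It is purely an assumption, since the post doesn’t describe how the game picks each day’s code. The idea is to derive the secret deterministically from the date, so every player gets the same puzzle without storing a row per day. `daily_code` and the salt value are hypothetical.

```python
import hashlib
from datetime import date


def daily_code(day: date, salt: str = "example-salt") -> str:
    """Derive a 4-digit secret for `day` (hypothetical scheme, not the game's).

    Hashing the salted ISO date means everyone who asks for the same date
    gets the same code, with no per-day database record required.
    """
    payload = f"{salt}:{day.isoformat()}".encode("utf-8")
    digest = hashlib.sha256(payload).hexdigest()
    # Reduce the 256-bit hash to 0..9999 and zero-pad, so codes such as
    # "0042" and codes with repeated digits are both possible.
    return f"{int(digest, 16) % 10_000:04d}"


if __name__ == "__main__":
    print(daily_code(date.today()))
```

Written down this way, the sketch surfaces decisions a context document has to settle: which time zone defines “today,” whether repeated digits are allowed, and whether the salt stays server-side so upcoming codes can’t be computed in advance.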

Writing that document did something subtle but important: it forced constraint.

Should repeated digits be allowed?

Do we store stats immediately or later?

Does the hint system reveal too much?

What’s intentionally out of scope?

Each answer narrowed the surface area of the build. And every time the surface area narrowed, the likelihood of actually finishing increased.

Product Thinking Over Speed

The narrative online often implies that AI reduces the need for deep product thinking. My experience was the opposite. AI magnifies the need for clarity.

When my instructions were vague, the responses were mediocre. When the rules were tight and well-defined, the output improved dramatically.

The tools didn’t remove the need for product discipline—they punished the lack of it. Before any UI existed, before a single user clicked anything, the most important work had already happened:

The mechanic was validated.

The edge cases were explored.

The scope was controlled.

The system was documented.

That phase didn’t look impressive. There were no flashy demos to share. No “launched in 24 hours” moment.

But it was the difference between building something that works once… and building something that could work every day.

By the end of this stage, there wasn’t a deployed website or a polished interface to show. There was no live link to share and no launch announcement drafted. What I had instead was something far more foundational: a validated core loop that held up outside of a screen, a clearly defined rule set that could withstand edge cases, a tightly constrained version-one scope, and a documented plan that felt realistic to execute.

That clarity changed the trajectory of the project. Instead of building blindly and hoping the product would take shape along the way, I was moving forward with intention. Most importantly, I was confident that the idea itself was worth the effort.

In the next post, I’ll walk through how I translated that thinking into early wireframes in Figma, used Figma Make to create a lightweight interactive prototype, and put it in front of real people as an alpha test before ever touching production code.

If you’re curious about where it eventually landed, you can see the finished result at playpadlock.com.